PropTech companies are, at their core, engines fueled by data. Property listings, comparable sales, rental rates, occupancy trends, and investment returns form the bedrock on which every product and feature is built. Consequently, when founders begin scaling their ventures, the instinctive response is often to build their data pipelines internally, own the entire technology stack, and keep full control over incoming information.

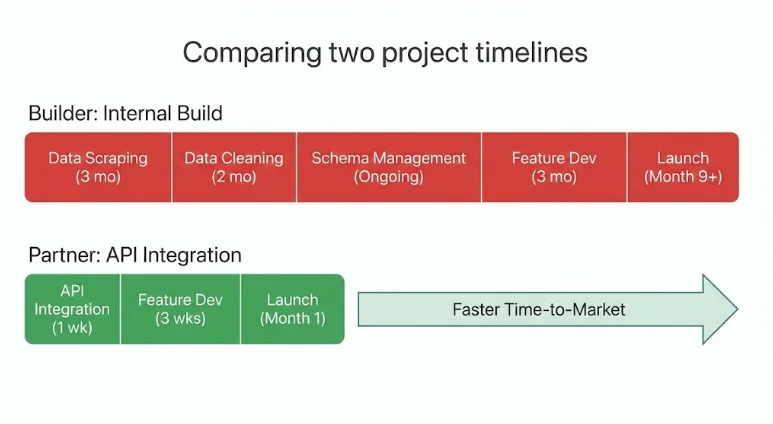

This initial impulse to build from scratch and maintain internal control can appear strategically sound. Ownership suggests defensibility, and building in-house seems to promise a long-term competitive advantage. However, the practical realities of the PropTech landscape frequently reveal a different narrative. Many teams discover that internal data infrastructure projects consume months of valuable engineering time before delivering any tangible output. The inherent fragility of web scraping, constant shifts in data schemas, and the complex process of cleaning and normalizing data from disparate sources can significantly slow product roadmaps. As infrastructure quietly expands, core product development languishes.

Meanwhile, competitors who have strategically integrated mature, external data systems are often able to ship features and iterate at a considerably faster pace. The PropTech companies that are achieving the most efficient and robust scaling today are not attempting to build every dataset from the ground up. Instead, they are making discerning decisions about which aspects of their operations truly define their unique value proposition and are choosing to partner for the rest. In the dynamic world of modern PropTech, the true differentiator is increasingly found not in control, but in leverage.

Understanding Strategic Data Partnerships in PropTech

A strategic data partnership in PropTech can be defined as a long-term integration with a specialized data provider that delivers production-ready real estate data via APIs. This approach contrasts sharply with the resource-intensive endeavor of building and maintaining internal data pipelines. Instead of dedicating months to scraping, cleaning, and continuously updating fragmented data sources, PropTech teams can integrate meticulously structured datasets that are perpetually refreshed and immediately ready for product integration. This paradigm shift allows companies to treat data as a utility – an essential infrastructure component – rather than a primary source of differentiation.

In practice, these strategic partnerships empower PropTech teams in several key areas:

- Accelerated Product Development: By offloading the complexity of data acquisition and management, engineering teams can redirect their focus towards developing core product features that directly impact user experience and revenue.

- Reduced Operational Overhead: The ongoing costs and complexities associated with maintaining internal data infrastructure, including scraper maintenance, schema adaptation, and data validation, are significantly mitigated.

- Enhanced Data Quality and Reliability: Specialized data providers invest heavily in data accuracy, completeness, and timeliness, offering a level of reliability that is often challenging and prohibitively expensive for individual PropTech companies to achieve internally.

- Faster Market Entry and Expansion: Access to comprehensive, pre-packaged data allows companies to launch new products or expand into new markets more rapidly, capitalizing on emerging opportunities.

It is crucial to understand that a strategic data partnership is not about outsourcing the core of a company’s product or intellectual property. Rather, it is about outsourcing the management of commoditized infrastructure, thereby enabling internal teams to concentrate their efforts and resources on developing what truly sets them apart in the market.

The Strategic Imperative: Build vs. Partner

Every PropTech founder, at some point in their growth trajectory, confronts a pivotal decision: should they build a particular capability internally, or should they seek a strategic partner? The answer to this question has profound implications, influencing not only the technical architecture of their product but also their speed to market, their operational burn rate, and their long-term scalability.

When Building Internally Makes Strategic Sense

Building a data capability internally is most judicious when that capability fundamentally defines the company’s core differentiation. If a specific feature or dataset is central to the organization’s unique value proposition, then owning and controlling that logic can significantly strengthen its defensibility against competitors. This often applies to proprietary underwriting algorithms, scoring models built on in-house methodologies, or highly specialized workflow automation tools that are difficult for others to replicate.

Internal builds are particularly justified when they:

- Form the Core of the Unique Selling Proposition: The feature is not merely a component but the very essence of what the company offers.

- Involve Proprietary Intellectual Property: The underlying logic or data manipulation techniques are a trade secret or patentable.

- Require Deep, Granular Control: The nature of the data or its processing demands an exceptionally high degree of customization and oversight that external providers cannot reasonably offer.

In these scenarios, the feature or data capability effectively is the company, making internal ownership a strategic necessity.

When Partnering Becomes the Strategic Choice

Partnerships become a more advantageous strategy when the data layer, while foundational, is not the primary source of differentiation. Real estate datasets are inherently complex, requiring continuous aggregation from numerous sources, meticulous cleaning, rigorous validation, and daily updates. Attempting to reconstruct and maintain this intricate infrastructure internally often leads to significant delays in core product development.

Partnering with specialized data providers emerges as a strategic imperative when:

- The Data is Commoditized but Essential: The data is widely available or can be sourced from multiple providers, and its value lies in its accessibility and integration rather than its unique origin.

- Data Maintenance is Resource-Intensive and Non-Differentiating: The effort required to keep data current, accurate, and compliant consumes engineering bandwidth that could be better allocated to product innovation.

- Speed to Market is Critical: Launching a product or feature quickly is essential to capture market share or respond to competitive pressures.

- Achieving Broad Coverage is Necessary: Reaching nationwide or global coverage with reliable data is a prerequisite for scaling, but building this infrastructure from scratch is prohibitively expensive and time-consuming.

In these contexts, integration is not merely a shortcut; it represents a strategic and efficient allocation of company resources. The most effective PropTech companies are those that meticulously build what makes them distinct and seamlessly integrate what enables them to scale efficiently.

Strategic Data Partnerships as a Capital Allocation Strategy

The decision to pursue a strategic data partnership is fundamentally a capital allocation decision, not merely a technical one. In the crucial early and growth stages of PropTech companies, engineering time represents one of the most valuable and scarce resources. Every month that an engineering team dedicates to building and maintaining internal data pipelines is a month that cannot be spent enhancing the product experience, developing innovative automation features, or solidifying the company’s core differentiation.

The sheer scale of reconstructing nationwide real estate data infrastructure internally is immense. It involves aggregating millions of MLS records, harmonizing diverse property attributes, accurately modeling rental income across various property types and markets, cleaning and validating short-term rental signals, rigorously verifying occupancy trends, and calculating complex ROI metrics – all of which must be refreshed daily. This work is inherently complex, perpetually ongoing, and largely invisible to the end-users of a PropTech product.

When companies opt to integrate mature real estate APIs from specialized providers, they gain immediate access to a wealth of meticulously curated and continuously updated information. This typically includes:

- Comprehensive Property Data: Detailed information on millions of properties, including features, historical sales, and ownership records.

- Up-to-Date Listing Information: Real-time access to active and recently sold residential and commercial listings.

- Accurate Rental Comps: Reliable data on comparable rental rates in specific neighborhoods and property classes.

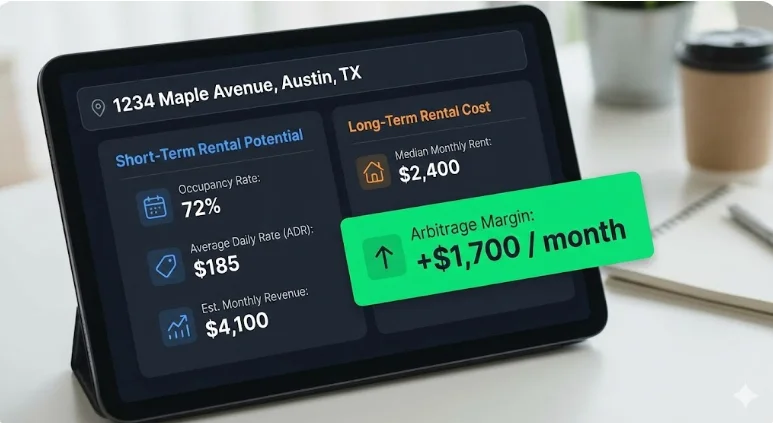

- Short-Term Rental Performance Metrics: Data on occupancy rates, average daily rates (ADR), and revenue per available room (RevPAR) for short-term rentals.

- Long-Term Rental Estimates: Projections for potential rental income for long-term leases.

- Investment Analytics: Pre-calculated metrics such as cash flow, capitalization rates (Cap Rates), and potential return on investment (ROI).

All of this critical data is delivered through structured, machine-readable endpoints, designed for seamless integration into PropTech applications. The result is an immediate acceleration of development cycles. Teams can significantly reduce their infrastructure overhead, preserve invaluable engineering focus, and accelerate their progress towards launching revenue-generating features. For venture-backed PropTech companies, in particular, strategic partnerships are not about relinquishing capability but about strategically directing capital towards innovation, allowing specialized data providers to handle the complex and resource-intensive foundational work.
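To make the investment metrics named above concrete, the following is a minimal sketch of how cap rate, cash flow, a cash-on-cash return (one common way to express ROI), and RevPAR are conventionally calculated. The `DealInputs` class, its field names, and the sample figures are illustrative assumptions, not any provider's schema or data.

```python
from dataclasses import dataclass


@dataclass
class DealInputs:
    """Hypothetical inputs for one rental property (illustrative field names)."""
    purchase_price: float
    annual_gross_rent: float
    annual_operating_expenses: float
    annual_debt_service: float
    cash_invested: float


def net_operating_income(deal: DealInputs) -> float:
    # NOI conventionally excludes financing costs.
    return deal.annual_gross_rent - deal.annual_operating_expenses


def cap_rate(deal: DealInputs) -> float:
    # Capitalization rate: NOI relative to purchase price.
    return net_operating_income(deal) / deal.purchase_price


def annual_cash_flow(deal: DealInputs) -> float:
    # Cash flow after operating expenses and debt payments.
    return net_operating_income(deal) - deal.annual_debt_service


def cash_on_cash_return(deal: DealInputs) -> float:
    # Annual cash flow relative to the cash actually invested.
    return annual_cash_flow(deal) / deal.cash_invested


def revpar(adr: float, occupancy_rate: float) -> float:
    # RevPAR: average daily rate scaled by the share of nights booked.
    return adr * occupancy_rate


# Made-up example deal, for illustration only.
deal = DealInputs(
    purchase_price=200_000,
    annual_gross_rent=24_000,
    annual_operating_expenses=8_000,
    annual_debt_service=10_000,
    cash_invested=50_000,
)
print(f"Cap rate: {cap_rate(deal):.1%}")                  # 8.0%
print(f"Cash flow: {annual_cash_flow(deal):,.0f}")        # 6,000
print(f"Cash-on-cash: {cash_on_cash_return(deal):.1%}")   # 12.0%
print(f"RevPAR: {revpar(150.0, 0.6):.2f}")                # 90.00
```

A provider that delivers these figures pre-calculated may use different expense assumptions, so it is worth confirming the definitions before comparing them with in-house numbers.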

Applied Examples Across PropTech Verticals

The strategic value of these data partnerships becomes even more apparent when examined across various PropTech categories. While the specific business models may differ, the fundamental challenge of scaling often remains the same: transforming fragmented real estate data into reliable, production-ready intelligence.

Marketplaces

Challenge: Property marketplaces thrive on accurate and comprehensive listings, up-to-date pricing history, granular neighborhood benchmarks, reliable rental comparables, and robust investment indicators across a multitude of cities.

Risk of Internal Build: In-house ingestion and normalization of MLS data, coupled with sophisticated performance modeling, necessitate constant updates and multi-source validation. Expanding market coverage incrementally can severely slow down growth and introduce inconsistencies in data quality.

Strategic Partnership: By integrating structured property data endpoints, powerful search APIs, and detailed neighborhood analytics with nationwide coverage, marketplaces can bypass the extensive infrastructure development that does not directly contribute to their core differentiation.

Scaling Outcome: Engineering resources are then freed to concentrate on enhancing marketplace liquidity, optimizing user experience, and streamlining transaction workflows – the true drivers of marketplace value.

Example: Rapid Deployment from Prototype to Production

A nascent PropTech startup developing a specialized underwriting tool for short-term rentals (STRs) leveraged an existing real estate API to launch production-grade analytics in under two weeks. Instead of investing months in normalizing listings, rental performance data, and ROI calculations, the team was able to dedicate their efforts to refining the user interface, optimizing workflows, and enhancing the deal evaluation logic. This agility allowed them to rapidly test product-market fit, onboard early adopters, and iterate on their offering without the immediate need for a dedicated data engineering team.

CRMs & Deal Management Platforms

Challenge: Users of Customer Relationship Management (CRM) platforms frequently need to exit the system to validate financial assumptions, leading to fragmented workflows and reduced platform stickiness.

Risk of Internal Build: Developing in-house underwriting layers requires the complex integration of diverse property data, short-term rental metrics, long-term rental estimates, historical performance arrays, and detailed ROI calculations. Each of these datasets demands continuous cleaning and daily updates.

Strategic Partnership: Embedding unified real estate APIs directly within the CRM platform enables deal validation to occur seamlessly within the existing workflow.

Scaling Outcome: This integration significantly reduces the time required for decision-making, improves user retention, and elevates the CRM from a simple workflow tool to a powerful decision-making engine.

AI Underwriting & Analytics Tools

Challenge: Machine learning systems are fundamentally dependent on structured, time-series datasets that possess consistent schemas and reliable input parameters.

Risk of Internal Build: Data obtained through scraping often lacks proper normalization, sample size validation, or fallback logic, leading to inconsistent inputs that generate unstable and unreliable outputs.

Strategic Partnership: Integrating APIs that provide comprehensive historical performance data (e.g., 36 months of monthly performance), built-in investment metrics, and statistical confidence indicators facilitates cleaner model training and accelerates deployment cycles.

Scaling Outcome: AI-powered tools can transition from prototype to production environments more rapidly, with a significantly reduced risk of modeling inaccuracies.
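As an illustration of the consistency checks that model inputs depend on, here is a minimal sketch that validates a 36-month series before training: it confirms the expected length, rejects gaps or duplicate months, and substitutes a fallback value where the sample size is too small. The record fields (`month`, `revenue`, `sample_size`), the threshold of 5, and the median fallback are all assumptions made for this example.

```python
from statistics import median
from typing import Dict, List, Union

Record = Dict[str, Union[str, float, int]]


def month_index(month: str) -> int:
    """Convert 'YYYY-MM' into a running month count so gaps are easy to spot."""
    year, mon = month.split("-")
    return int(year) * 12 + int(mon) - 1


def prepare_series(
    records: List[Record],
    expected_months: int = 36,
    min_sample_size: int = 5,
) -> List[float]:
    """Return a gap-free monthly revenue series, or raise ValueError."""
    ordered = sorted(records, key=lambda r: month_index(str(r["month"])))
    if len(ordered) != expected_months:
        raise ValueError(f"expected {expected_months} months, got {len(ordered)}")

    # Every consecutive pair must be exactly one month apart.
    for prev, cur in zip(ordered, ordered[1:]):
        step = month_index(str(cur["month"])) - month_index(str(prev["month"]))
        if step != 1:
            raise ValueError(f"gap or duplicate near {cur['month']}")

    reliable = [
        float(r["revenue"]) for r in ordered if int(r["sample_size"]) >= min_sample_size
    ]
    if not reliable:
        raise ValueError("no month meets the minimum sample size")

    # Fallback (an assumption): replace thin-sample months with the median
    # of the reliable months instead of training on a noisy value.
    fallback = median(reliable)
    return [
        float(r["revenue"]) if int(r["sample_size"]) >= min_sample_size else fallback
        for r in ordered
    ]
```

Raising on gaps rather than silently interpolating keeps schema or coverage problems visible; whether a median fallback is appropriate depends on the model and the market.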

Investment & Portfolio Platforms

Challenge: Institutional-grade underwriting necessitates access to detailed data on occupancy rates, ADR, RevPAR, rental comps, expense modeling, and comprehensive ROI projections across multiple markets. Furthermore, identifying profitable rental arbitrage opportunities requires evaluating short-term and long-term rental potential side by side.

Risk of Internal Build: Reconstructing nationwide data coverage with daily refresh cycles is an extraordinarily capital-intensive and slow process. Developing separate data pipelines for short-term and long-term rental data effectively doubles the infrastructure burden.

Strategic Partnership: Leveraging harmonized datasets with unified schemas and pre-modeled financial indicators eliminates significant infrastructure drag. Partnering for an API that provides access to both STR and LTR data unlocks immediate arbitrage analysis capabilities without the need for managing multiple, competing data subscriptions.

Scaling Outcome: These platforms can deliver institutional-grade analytics and sophisticated arbitrage modeling capabilities without incurring the associated institutional overhead, thereby accelerating market expansion.
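The arbitrage comparison described in this section can be sketched in a few lines once STR and LTR figures are available in one place. The function names, the 30-day month, and the cost parameter below are assumptions for illustration; a real model would also account for seasonality, fees, and vacancy between bookings.

```python
def str_monthly_revenue(adr: float, occupancy_rate: float, days_in_month: int = 30) -> float:
    """Estimated short-term rental revenue for one month."""
    return adr * occupancy_rate * days_in_month


def arbitrage_spread(
    adr: float,
    occupancy_rate: float,
    ltr_monthly_rent: float,
    str_monthly_costs: float = 0.0,
    days_in_month: int = 30,
) -> float:
    """Monthly margin from leasing at the LTR rent and operating as an STR.

    A positive value suggests the STR strategy out-earns the long-term rent
    after the stated operating costs; a negative value suggests the opposite.
    """
    revenue = str_monthly_revenue(adr, occupancy_rate, days_in_month)
    return revenue - str_monthly_costs - ltr_monthly_rent


# Made-up figures: $200 ADR at 50% occupancy vs. a $2,000 long-term rent,
# with $400 of monthly STR operating costs.
print(arbitrage_spread(200.0, 0.5, 2000.0, str_monthly_costs=400.0))  # 600.0
```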

The Competitive Advantage of Speed

Real estate markets move quickly and do not wait for product roadmaps to be completed. Short-term rental revenues can fluctuate dramatically, often by 20% to 50% between peak and off-peak seasons. Supply in any given market shifts constantly as new listings emerge. Regulations evolve, and changes in interest rates can alter underwriting assumptions almost overnight.
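For a sense of what a seasonal swing of this size means numerically, here is a small sketch that measures the drop from the peak month to the weakest month as a share of the peak. Defining the swing relative to the peak is an assumption; other definitions, such as measuring against the annual mean, give different percentages.

```python
from typing import Sequence


def seasonal_swing(monthly_revenue: Sequence[float]) -> float:
    """Drop from the best month to the worst month, as a fraction of the best."""
    peak = max(monthly_revenue)
    trough = min(monthly_revenue)
    if peak <= 0:
        raise ValueError("peak revenue must be positive")
    return (peak - trough) / peak


# Made-up monthly revenues: a $4,000 peak month and a $2,000 off-peak month.
print(f"{seasonal_swing([4000.0, 3200.0, 2000.0]):.0%}")  # 50%
```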

In such an environment, speed is not merely a cosmetic advantage; it is a strategic imperative. Companies that opt to build every data layer internally often spend many months stabilizing their pipelines before they can even launch initial features. By the time their internal infrastructure is deemed production-ready, the market may have already shifted significantly.

In contrast, companies that strategically integrate mature data infrastructure from specialized providers gain a distinct advantage. They can:

- Launch Features Faster: Reduce time-to-market by leveraging pre-existing, reliable data.

- Respond Quickly to Market Changes: Adapt to evolving market conditions and regulatory shifts with greater agility.

- Iterate Based on Real-Time Data: Continuously refine product offerings and strategies using the most current information.

This compounding effect of speed leads to earlier feedback loops, accelerated revenue generation, and a stronger brand positioning in the market. In the highly competitive PropTech landscape, the difference between industry leadership and lagging behind is often measured not in the novelty of ideas, but in the velocity of execution.

The Modern Scaling Model: Builders vs. Orchestrators

As the PropTech sector matures, a distinct pattern in scaling methodologies is becoming increasingly evident. Companies tend to align with one of two primary models: "Builders" or "Orchestrators."

Builders attempt to own and control every layer of their technology stack. They ingest listings, normalize property data, model rental performance, calculate ROI, and manage all update cycles internally. While this approach offers a high degree of control, it often results in significant infrastructure drag: ongoing maintenance burdens, accumulating technical debt, and slower feature development.

Orchestrators, conversely, embrace a more integrated approach. They strategically partner with best-in-class data providers for foundational datasets, thereby freeing up their internal resources. These resources are then concentrated on developing differentiators such as user experience, advanced automation capabilities, proprietary AI logic, and innovative workflow enhancements.

Builders often compete on the perceived completeness of their internal capabilities. Orchestrators, on the other hand, compete on speed, focus, and the intelligent application of external resources. In today’s increasingly data-dense markets, true differentiation rarely stems from the act of rebuilding commodity infrastructure. Instead, it arises from the intelligence and innovation applied to leveraging that infrastructure. The PropTech companies achieving the most efficient and sustainable scaling today predominantly resemble orchestrators. They strategically leverage partnerships to accelerate their progress and build more intelligently.

What an Infrastructure-Level Data Partnership Looks Like in Practice

In practical terms, a strategic data partnership entails integrating a unified, production-ready real estate API rather than attempting to build fragmented data pipelines internally. Infrastructure-grade solutions, such as advanced APIs offered by specialized data providers, consolidate property data, MLS-style listings, short-term rental performance metrics, long-term rental estimates, and investment analytics into structured REST endpoints meticulously designed for PropTech applications.

Instead of expending significant effort stitching together disparate data sources like scraped calendar data, information from various rental platforms, and public records, PropTech teams can directly retrieve clean, machine-readable JSON data. This data typically includes crucial metrics such as occupancy rates, ADR, revenue, RevPAR, rental comparables, built-in ROI calculations, and even historical performance data spanning 36 months, all refreshed daily and often available across all 50 U.S. states.

This integrated approach effectively removes the considerable burden of multi-source aggregation, normalization, and ongoing maintenance. It liberates engineering teams to concentrate on developing core product functionalities like workflow optimization, advanced automation, sophisticated AI models, and superior user experiences, all while relying on a validated, continuously updated data infrastructure operating reliably beneath the surface. This is the tangible outcome of a strategic data partnership in execution.
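On the consuming side, working with such an endpoint often amounts to parsing a JSON payload into a typed structure and failing loudly when a field is missing. The sketch below assumes a hypothetical payload shape; the field names and sample values are invented for illustration and do not describe any specific provider's API.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class StrPerformance:
    """Typed view of a hypothetical short-term rental performance payload."""
    occupancy_rate: float
    adr: float
    revenue: float
    revpar: float


REQUIRED_FIELDS = ("occupancy_rate", "adr", "revenue", "revpar")


def parse_performance(payload: str) -> StrPerformance:
    """Parse a JSON string and validate that the expected fields are present."""
    data = json.loads(payload)
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        # Surfacing schema drift early is cheaper than debugging bad analytics.
        raise ValueError(f"missing fields: {missing}")
    return StrPerformance(**{name: float(data[name]) for name in REQUIRED_FIELDS})


# Made-up sample payload, for illustration only.
sample = '{"occupancy_rate": 0.62, "adr": 180.0, "revenue": 3348.0, "revpar": 111.6}'
performance = parse_performance(sample)
print(performance.adr)  # 180.0
```

Keeping this parsing step in one small module also limits how much product code has to change if the provider or its schema changes later.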

Conclusion: The Evolving PropTech Playbook

In an industry as fundamentally data-driven as real estate, the notion of control can often be perceived as a significant strength. However, in practice, leverage often proves to be a more potent force for scalable growth. Strategic data partnerships empower PropTech companies to achieve rapid scaling without overextending their engineering teams or unnecessarily delaying critical product innovation.

By integrating mature, structured datasets that encompass everything from core property intelligence to detailed rental performance and sophisticated investment analytics, companies can strategically refocus their internal efforts on what truly differentiates them: the ingenuity of their workflows, the sophistication of their automation, the intelligence of their AI applications, and the overall user experience they deliver.

The decision to build or partner is no longer solely a technical consideration; it is a deeply strategic one. Founders must move beyond simply asking if they can build a particular capability internally and instead focus on whether building it moves them closer to their core competitive advantage. The PropTech companies experiencing the most rapid growth in today’s dynamic landscape are not necessarily those that collect the most raw data. Instead, they are the organizations that expertly connect the right data, through the most effective infrastructure, at the optimal speed. This represents the modern playbook for achieving sustained growth and market leadership.

Who Benefits Most from Strategic Data Partnerships

This approach to data infrastructure is particularly well-suited for PropTech teams that:

- Prioritize Speed to Market: Companies needing to launch products or features quickly to capture market share or respond to competitive pressures.

- Focus on Core Differentiation: Ventures whose primary value proposition lies in proprietary algorithms, unique user experiences, or specialized workflow automation, rather than data aggregation itself.

- Operate with Lean Engineering Teams: Startups or growth-stage companies that need to maximize the impact of their limited engineering resources.

- Require Broad Geographic Coverage: Businesses that need reliable data across multiple markets but lack the resources to build and maintain disparate regional data pipelines.

- Seek Predictable Data Costs: Companies aiming for transparent and scalable data costs that align with their growth trajectory.

This model may not be the optimal choice for companies whose core business is inherently data licensing or for those actively engaged in building proprietary, unique datasets as their principal differentiator.

Navigating the Build vs. Buy Decision for Your Data Stack

For PropTech firms currently evaluating whether to invest in building their own real estate data infrastructure or integrating with an established API partner, a thorough architectural and roadmap review is essential. Engaging with experts who can pressure-test these decisions can provide invaluable clarity.

A conversation with data solution providers about specific use cases, technical requirements, and scaling goals can help chart the most effective path forward.