The real estate technology sector, or PropTech, runs on data. Property listings, comparable sales, rental rates, occupancy trends, and investment returns underpin every product and service in the sector. So when PropTech companies begin to scale, the instinctive move is to build robust internal data pipelines, own the entire technology stack, and control every data input. That instinct can look strategic, promising defensibility and long-term advantage, but the practical realities of the PropTech landscape increasingly point to a different path to efficient growth and competitive edge.

In the early stages of a PropTech venture, the allure of internal data infrastructure development is understandable. Founders may perceive owning the data pipeline as a key differentiator, a way to ensure data quality and proprietary access that competitors cannot easily replicate. This approach aligns with a traditional mindset of vertical integration, where control over critical components is seen as a safeguard against external dependencies and a driver of innovation. The belief is that by meticulously constructing every piece of the data puzzle, from scraping raw information to cleaning, normalizing, and structuring it for product use, a company can build a truly unique and powerful asset.

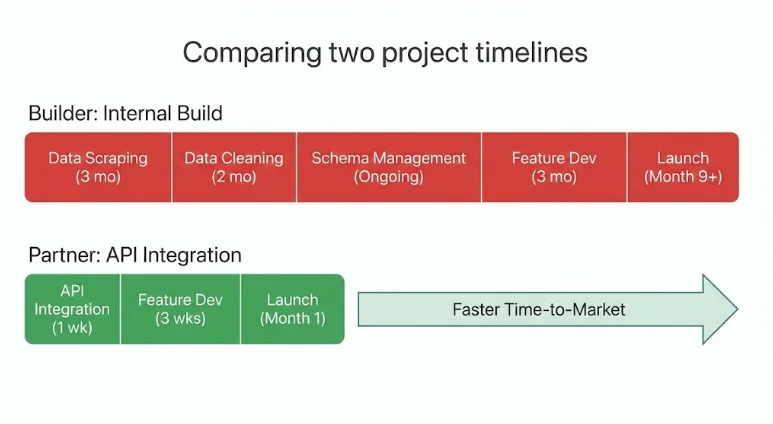

However, for many PropTech teams, this instinct leads to unforeseen challenges and significant resource drains. Building internal data pipelines is slow, often taking months of engineering effort before any tangible product value is delivered. Web scrapers are inherently fragile, so these pipelines break whenever a source website changes its structure or a platform ships an update. Data schemas, the organizational frameworks for data, can shift unexpectedly as sources evolve. Integrating and maintaining multi-source datasets also requires continuous, intensive cleaning and normalization to keep records consistent and accurate. The result is a slower product roadmap, as engineering teams are diverted from core product development to the quiet, yet demanding, expansion of data infrastructure.
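To make the schema problem concrete, here is a minimal sketch of the kind of drift check an internal pipeline ends up needing for every source it scrapes. The field names and the `find_schema_drift` helper are hypothetical and chosen only for illustration; a real pipeline would carry one of these per source and keep it updated as sources change.

```python
from typing import Any

# Hypothetical expected schema for one scraped listing source: field name -> type.
EXPECTED_SCHEMA: dict[str, type] = {
    "listing_id": str,
    "price": float,
    "bedrooms": int,
    "zip_code": str,
}


def find_schema_drift(
    record: dict[str, Any],
    schema: dict[str, type] = EXPECTED_SCHEMA,
) -> list[str]:
    """Return a list of problems found in one record; an empty list means no drift."""
    problems: list[str] = []
    # Check every expected field for presence and type.
    for field, expected_type in schema.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            actual = type(record[field]).__name__
            problems.append(
                f"wrong type for {field}: expected {expected_type.__name__}, got {actual}"
            )
    # Flag fields the source has added or renamed.
    for field in record:
        if field not in schema:
            problems.append(f"unexpected field: {field}")
    return problems


if __name__ == "__main__":
    # A source that renamed "bedrooms" to "beds" and started sending price as text.
    drifted = {"listing_id": "A1", "price": "$450,000", "beds": 3, "zip_code": "78701"}
    for problem in find_schema_drift(drifted):
        print(problem)
```

Even this small check has to be written, monitored, and revised for each source, which is part of the ongoing maintenance cost described above.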

In parallel, competitors who have embraced more mature and streamlined data integration strategies are often able to ship product features and innovations at a considerably faster pace. This disparity in speed can create a significant competitive disadvantage, allowing rivals to capture market share, onboard users, and iterate on their offerings while the "builder" companies are still wrestling with foundational data challenges. The PropTech companies that are achieving the most efficient scaling today are not necessarily the ones building every dataset from scratch. Instead, they are making deliberate, strategic decisions about which aspects of their business truly define their unique value proposition and are actively choosing to partner for the rest of their data needs. In the contemporary PropTech ecosystem, the real strategic advantage is shifting from absolute control to intelligent leverage.

What is a Strategic Data Partnership in PropTech (and Why It Matters)?

A strategic data partnership in PropTech is a long-term integration with a specialized external data provider. The provider delivers production-ready real estate data through application programming interfaces (APIs), so the company does not need to build and maintain its own internal data pipelines. Instead of dedicating substantial resources to scraping, cleaning, and updating fragmented data sources, companies integrate pre-structured datasets that are continuously refreshed and immediately ready for product use. This shift allows PropTech firms to treat foundational data as a utility or infrastructure component, rather than as a core element of their product differentiation.

In practical terms, these strategic partnerships offer a multitude of benefits to PropTech teams, enabling them to:

- Accelerate Product Development: By accessing ready-to-use data, engineering teams can bypass months of infrastructure development and focus directly on building and refining product features that directly impact user experience and business value.

- Reduce Engineering Costs and Burn Rate: The significant investment in hiring and retaining specialized data engineering talent, along with the ongoing operational costs of maintaining complex data pipelines, can be substantially reduced. This capital can then be reallocated to more strategic areas of growth.

- Enhance Data Quality and Reliability: Specialized data providers invest heavily in data acquisition, validation, and quality control processes, often employing advanced techniques and extensive resources that individual PropTech companies may struggle to match. This leads to more accurate and dependable data for downstream applications.

- Improve Scalability and Coverage: Partnering with providers who already possess broad geographic coverage and robust data aggregation capabilities allows PropTech companies to scale their operations more rapidly and expand into new markets without the significant overhead of building new data infrastructure for each region.

- Focus on Core Competencies: By outsourcing the complexities of data acquisition and management, PropTech companies can concentrate their internal resources and talent on their unique intellectual property, such as proprietary algorithms, innovative user interfaces, and specialized workflow automation.

It is crucial to understand that a strategic data partnership is not about outsourcing the core product or the intellectual property that defines a company. Instead, it is about strategically outsourcing the acquisition and management of commodity infrastructure – the foundational data that is essential but not inherently differentiating. This allows companies to double down on what truly sets them apart and provides a unique competitive advantage in the marketplace.

The Strategic Decision: Build vs. Partner

Every PropTech founder eventually confronts a pivotal decision: should they build a specific data capability internally or should they seek a strategic partnership for it? This choice has profound implications, shaping not only the technical architecture of the product but also significantly influencing the company’s speed to market, its burn rate, and its long-term scalability.

When to Build

Building a data capability internally is generally the more appropriate strategy when that capability fundamentally defines the company’s core differentiation. If a particular feature or data set is central to the company’s unique value proposition, then owning and controlling the logic behind it can strengthen its defensibility against competitors. This rationale strongly applies to proprietary underwriting algorithms, unique scoring models that leverage proprietary data relationships, or highly specialized workflow automation tools that are difficult or impossible for others to replicate.

Internal builds are strategically justified when they:

- Represent a Core Competitive Differentiator: The capability is not just a feature, but the very essence of what makes the company unique and valuable.

- Involve Proprietary Data or Methodologies: The company is developing or utilizing data sources or analytical methods that are not readily available elsewhere and are critical to its competitive advantage.

- Require Deep Domain Expertise and Control: The nuances of the data or the logic applied to it are so specific and critical that only in-house expertise can adequately manage them.

- Create a Significant Barrier to Entry for Competitors: Owning the data infrastructure or the processes around it creates a substantial hurdle for new entrants or existing players to overcome.

In these specific cases, the feature or data capability is, in essence, the company itself, and therefore, maintaining complete control is paramount.

When to Partner

Partnerships, conversely, become a more strategic and advantageous option when the data layer, while foundational and essential, is not the primary source of differentiation. Real estate datasets, by their very nature, require continuous aggregation from numerous sources, meticulous cleaning, rigorous validation, and daily updates to remain relevant and accurate. Attempting to reconstruct and maintain this complex infrastructure internally often leads to significant delays in core product development and innovation.

Partnering becomes a strategic imperative when:

- The Data is Foundational but Not Differentiating: The data is necessary for the product to function but does not represent the core intellectual property or unique selling proposition.

- Scalability and Coverage are Paramount: The business requires access to broad geographic data coverage or a wide array of data types that would be prohibitively expensive and time-consuming to build independently.

- Speed to Market is Critical: The company needs to launch or iterate on its product rapidly to capture market opportunities or respond to competitive pressures.

- Resource Constraints Exist: The company has limited engineering bandwidth or capital that can be more effectively deployed on product development and customer acquisition rather than infrastructure maintenance.

- Data Updates are Constant and Demanding: The data requires daily or even more frequent refreshes, representing a significant ongoing operational burden.

In these scenarios, integrating with a specialized data provider is not merely a shortcut; it represents a strategic allocation of resources. The most effective PropTech teams are those that judiciously build what makes them distinctly different and integrate what makes them powerfully scalable.

Partnerships as a Capital Allocation Strategy

Strategic data partnerships should not be viewed as mere technical shortcuts but rather as sophisticated capital allocation decisions. In the early and growth stages of PropTech companies, engineering time is arguably the most valuable and expensive resource. Every month that an engineering team dedicates to building and maintaining internal data pipelines is a month that could have been spent enhancing the product experience, developing critical automation features, or solidifying the company’s core differentiation.

The endeavor of reconstructing comprehensive nationwide real estate data infrastructure internally is a monumental task. It involves aggregating millions of MLS records, harmonizing diverse property attributes across different jurisdictions, modeling rental income based on various market factors, cleaning noisy short-term rental signals, validating complex occupancy trends, and consistently calculating return on investment (ROI) metrics. This work is not only technically complex but also ongoing and largely invisible to the end users of the PropTech product.

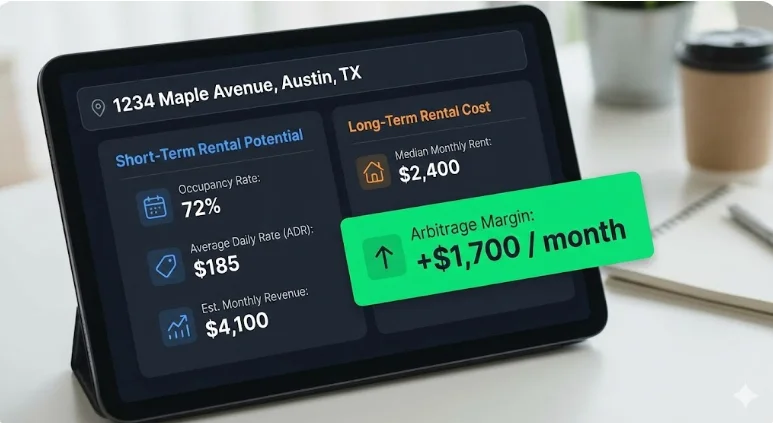

When companies choose to integrate mature real estate APIs instead, they gain immediate access to a wealth of meticulously curated and structured data. This typically includes:

- Comprehensive Property Data: Detailed information on millions of properties, including attributes, sales history, and ownership records.

- MLS-Style Listings: Accurate and up-to-date listing data, crucial for marketplaces and valuation tools.

- Short-Term Rental (STR) Performance Metrics: Key indicators such as occupancy rates, Average Daily Rate (ADR), and Revenue Per Available Room (RevPAR), essential for short-term rental investors and operators.

- Long-Term Rental (LTR) Estimates: Reliable projections for rental income on traditional long-term leases, vital for diversified real estate investment strategies.

- Pre-Calculated Investment Analytics: Built-in metrics like ROI, cash-on-cash return, and cap rates, saving significant analytical effort.

- Neighborhood and Market Benchmarks: Data on local market trends, comparable sales, and rental rates to inform investment decisions.

All of this data is delivered through structured, machine-readable endpoints, typically in JSON format, designed specifically for seamless integration into PropTech applications. The result of this approach is immediate acceleration. Teams can significantly reduce their infrastructure overhead, preserve valuable engineering focus on high-impact initiatives, and move much faster toward developing and deploying revenue-generating features. For venture-backed PropTech companies in particular, strategic data partnerships are not about outsourcing capability; they are a deliberate strategy to direct precious capital towards innovation and allow specialized data providers to handle the heavy lifting of data infrastructure.
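As a rough illustration of what "pre-calculated investment analytics" saves, the sketch below computes cap rate and cash-on-cash return using their common textbook definitions. The `Deal` fields and function names are assumptions made for this example, and any given provider may define or adjust these metrics differently.

```python
from dataclasses import dataclass


@dataclass
class Deal:
    """Hypothetical inputs for one property; all amounts are annual except prices."""
    purchase_price: float
    annual_gross_rent: float
    annual_operating_expenses: float  # taxes, insurance, maintenance; excludes debt service
    annual_debt_service: float        # mortgage principal and interest
    cash_invested: float              # down payment plus closing and upfront costs


def net_operating_income(deal: Deal) -> float:
    """NOI = gross rent minus operating expenses (before financing)."""
    return deal.annual_gross_rent - deal.annual_operating_expenses


def cap_rate(deal: Deal) -> float:
    """Cap rate = NOI / purchase price."""
    return net_operating_income(deal) / deal.purchase_price


def cash_on_cash_return(deal: Deal) -> float:
    """Cash-on-cash = pre-tax cash flow after debt service / cash invested."""
    cash_flow = net_operating_income(deal) - deal.annual_debt_service
    return cash_flow / deal.cash_invested


if __name__ == "__main__":
    deal = Deal(
        purchase_price=400_000,
        annual_gross_rent=36_000,
        annual_operating_expenses=12_000,
        annual_debt_service=18_000,
        cash_invested=100_000,
    )
    print(f"cap rate: {cap_rate(deal):.2%}")
    print(f"cash-on-cash: {cash_on_cash_return(deal):.2%}")
```

The formulas are simple; the hard part is sourcing reliable rent and expense inputs for every property, which is the work a data partner takes on.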

Applied Examples Across PropTech Verticals

The strategic advantage of embracing data partnerships becomes even more apparent when examined across various PropTech categories. While business models and specific challenges may differ, the underlying scaling challenge often remains the same: transforming fragmented and often unreliable real estate data into dependable, production-ready intelligence that fuels product innovation and customer value.

Marketplaces

- Challenge: Property marketplaces rely heavily on accurate and comprehensive listings, up-to-date pricing history, granular neighborhood benchmarks, up-to-the-minute rental comparables, and insightful investment indicators across numerous cities and regions.

- Risk of Building: In-house ingestion and normalization of MLS data, property attribute harmonization, and the development of performance modeling capabilities require constant updates and multi-source validation. Expanding coverage market by market becomes a significant bottleneck, slowing growth and introducing data inconsistencies.

- Strategic Partnership: By integrating structured property endpoints, powerful search APIs, and robust neighborhood analytics that offer nationwide coverage, marketplaces can circumvent the need to build and maintain infrastructure that does not directly differentiate their core offering.

- Scaling Outcome: Engineering resources can be laser-focused on enhancing liquidity, optimizing user experience, and streamlining transaction workflows – the true drivers of marketplace value.

CRMs & Deal Management Platforms

- Challenge: Users of CRM platforms frequently need to exit the system to validate financial assumptions or gather property-specific data, leading to a fragmented workflow and reduced user stickiness.

- Risk of Building: Developing an in-house underwriting layer involves merging disparate property data, short-term rental metrics, long-term rental estimates, historical performance arrays, and complex ROI calculations. Each of these datasets requires ongoing cleaning and daily updates.

- Strategic Partnership: Embedding unified real estate APIs directly within the CRM platform allows for immediate deal validation and data enrichment to occur seamlessly within the user’s existing workflow.

- Scaling Outcome: The time to decision-making is dramatically reduced. User retention improves as the platform becomes an indispensable tool for critical financial analysis. The CRM evolves from a mere workflow tool into a powerful decision-making engine.

AI Underwriting & Analytics Tools

- Challenge: Machine learning systems, particularly in underwriting and predictive analytics, are highly dependent on structured, time-series datasets that possess consistent schemas and reliably accurate inputs.

- Risk of Building: Data obtained through web scraping often lacks proper normalization, sufficient sample size validation, or robust fallback logic. Inconsistent or incomplete inputs inevitably lead to unstable and unreliable model outputs, hindering the development of accurate AI solutions.

- Strategic Partnership: APIs that consistently deliver 36 months of monthly performance data, pre-built investment metrics, and statistical confidence indicators enable cleaner model training and faster deployment of AI-powered tools.

- Scaling Outcome: AI tools can transition from prototype to production environments much more rapidly, with significantly reduced modeling risk and a higher probability of delivering accurate and actionable insights.
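Before a monthly history feeds a model, it is worth confirming that the series is complete and that each month rests on enough observations. The sketch below shows one way to do that; the row shape (`month`, `sample_size`) and the thresholds are assumptions for illustration, not any provider's actual format.

```python
def month_index(year_month: str) -> int:
    """Convert 'YYYY-MM' into a running month count so gaps are easy to detect."""
    year, month = year_month.split("-")
    return int(year) * 12 + int(month) - 1


def validate_history(
    rows: list[dict],
    expected_months: int = 36,
    min_sample_size: int = 10,
) -> list[str]:
    """Return a list of issues in a monthly series; an empty list means it looks usable."""
    issues: list[str] = []
    if len(rows) != expected_months:
        issues.append(f"expected {expected_months} months, got {len(rows)}")
    # Each row should be exactly one month after the previous one.
    for prev, cur in zip(rows, rows[1:]):
        if month_index(cur["month"]) - month_index(prev["month"]) != 1:
            issues.append(f"gap or disorder between {prev['month']} and {cur['month']}")
    # Months backed by too few observations are flagged rather than silently used.
    for row in rows:
        if row["sample_size"] < min_sample_size:
            issues.append(f"low sample size in {row['month']}: {row['sample_size']}")
    return issues


if __name__ == "__main__":
    months = [f"{2022 + i // 12}-{i % 12 + 1:02d}" for i in range(36)]
    history = [{"month": m, "sample_size": 25} for m in months]
    del history[5]  # simulate a missing month
    for issue in validate_history(history):
        print(issue)
```

A training job can refuse to run, or fall back to a broader geography, whenever this check returns issues.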

Investment & Portfolio Platforms

- Challenge: Institutional-grade underwriting demands access to critical metrics such as occupancy rates, Average Daily Rate (ADR), Revenue Per Available Room (RevPAR), detailed rental comps, sophisticated expense modeling, and accurate ROI projections across multiple markets. Furthermore, identifying highly profitable rental arbitrage opportunities requires a simultaneous evaluation of both short-term and long-term rental potential.

- Risk of Building: Reconstructing nationwide data coverage with daily refresh cycles is exceptionally capital-intensive and inherently slow. Furthermore, building separate data pipelines for short-term and long-term rental data effectively doubles the infrastructure burden and complexity.

- Strategic Partnership: Leveraging harmonized datasets with unified schemas and pre-modeled financial indicators eliminates the significant drag of building and maintaining complex data infrastructure. Partnering for an API that provides access to both STR and LTR data unlocks immediate arbitrage analysis capabilities without the need for managing multiple, disparate data subscriptions or building complex integration layers.

- Scaling Outcome: Platforms can deliver sophisticated institutional-grade analytics and deep arbitrage modeling capabilities without incurring the prohibitive overhead associated with building such infrastructure internally, thereby accelerating expansion across diverse markets.
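The arbitrage comparison mentioned above can be sketched in a few lines once both short-term and long-term figures are available from one source. This example is deliberately simplified and assumes gross revenue only: it ignores cleaning costs, platform fees, furnishing, and long-term vacancy, all of which a real underwriting model would include. The function names are hypothetical.

```python
def str_annual_revenue(adr: float, occupancy_rate: float, nights: int = 365) -> float:
    """Gross short-term rental revenue: average daily rate x occupancy x nights."""
    return adr * occupancy_rate * nights


def compare_strategies(adr: float, occupancy_rate: float, ltr_monthly_rent: float) -> dict:
    """Compare gross annual STR revenue with gross annual LTR rent for one property."""
    str_annual = str_annual_revenue(adr, occupancy_rate)
    ltr_annual = ltr_monthly_rent * 12
    return {
        "str_annual": str_annual,
        "ltr_annual": ltr_annual,
        # Relative uplift of STR over LTR; negative means LTR grosses more.
        "str_premium": str_annual / ltr_annual - 1,
        "higher_gross": "STR" if str_annual > ltr_annual else "LTR",
    }


if __name__ == "__main__":
    result = compare_strategies(adr=200.0, occupancy_rate=0.6, ltr_monthly_rent=2500.0)
    print(result)
```

With a unified schema, the same property identifier supplies both sides of the comparison, so no cross-provider record matching is needed.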

The Competitive Advantage of Speed

Real estate markets are dynamic and often volatile, and they do not wait for product roadmaps to catch up. Short-term rental revenue, for instance, can fluctuate dramatically, often by 20% to 50% between peak and off-peak seasons. Supply levels shift constantly as new listings enter a market. Regulatory environments can evolve rapidly, affecting operational feasibility and profitability. Interest rates, a key driver of investment decisions, can change underwriting assumptions almost overnight.

In this environment, speed is not a mere cosmetic advantage; it is a fundamental strategic imperative. Companies that choose to build every data layer internally often find themselves spending months stabilizing complex pipelines before they can even launch their initial features. By the time their internal infrastructure is robust and reliable, the market dynamics may have already shifted significantly, diminishing the impact of their delayed entry.

In contrast, companies that strategically integrate mature data infrastructure gain a decisive edge. They can:

- Launch Products and Features Faster: By leveraging pre-built data access, development cycles are significantly shortened, allowing for quicker market entry and iteration.

- Respond Rapidly to Market Changes: Access to real-time or near real-time data enables companies to adapt their strategies and offerings swiftly to evolving market conditions.

- Iterate Based on Real User Feedback: Shipping earlier allows for the collection of crucial user feedback, enabling product teams to refine their offerings and ensure they meet market demands.

- Gain Market Share and Brand Recognition: Faster execution translates to increased visibility, stronger brand positioning, and a greater ability to capture market share before competitors.

This advantage compounds across the entire growth cycle. Shipping earlier leads to faster feedback loops, quicker revenue generation, and stronger brand positioning. In increasingly competitive PropTech categories, the difference between leading and lagging is often measured not by the brilliance of an idea, but by the velocity of its execution.

The Modern Scaling Model: Builders vs. Orchestrators

As the PropTech industry matures, a clear pattern in scaling strategies is emerging. Companies tend to gravitate towards one of two primary models: "Builders" or "Orchestrators."

Builders typically attempt to own and control every layer of their technology stack. They invest heavily in ingesting raw listings, normalizing property data, modeling rental performance, calculating ROI, and meticulously maintaining their own update cycles internally. While this approach offers a high degree of control and can foster deep internal expertise, it also inherently creates significant infrastructure drag. This includes the ongoing burden of maintenance, the accumulation of technical debt, and a naturally slower pace of feature velocity due to the overhead of managing complex internal systems.

Orchestrators, on the other hand, adopt a different philosophy. They strategically integrate best-in-class data providers for foundational datasets, treating these as essential infrastructure components. Their internal resources are then concentrated on developing and refining the aspects of their business that truly drive differentiation, such as superior user experience, advanced automation capabilities, proprietary AI logic, and innovative workflow solutions.

Builders often compete primarily on the completeness of their internally managed data. Orchestrators, however, compete on speed, agility, and the intelligent application of data. In today’s increasingly data-dense markets, true differentiation rarely stems from the act of rebuilding commodity infrastructure. Instead, it emerges from how intelligently and effectively that foundational infrastructure is leveraged and applied to solve user problems. The PropTech companies that are scaling most efficiently and effectively today largely resemble orchestrators. They strategically leverage partnerships to move faster, build smarter, and ultimately deliver greater value to their customers.

What an Infrastructure-Level Data Partnership Looks Like in Practice

In practical terms, a strategic data partnership translates to integrating a unified, production-ready real estate API rather than attempting to build and manage fragmented, often unreliable, internal data pipelines. Infrastructure-grade solutions, such as APIs that consolidate property data, MLS-style listings, short-term rental performance metrics, long-term rental estimates, and detailed investment analytics into structured REST endpoints, are specifically designed for the unique demands of PropTech applications.

Rather than expending considerable effort stitching together disparate sources like scraped calendar data, fragmented rental platforms, and publicly available records, PropTech teams can leverage these APIs to retrieve clean, machine-readable JSON data. This data often includes comprehensive metrics such as occupancy rates, ADR, revenue, RevPAR, detailed rental comparables, built-in ROI calculations, and even historical performance arrays stretching back 36 months, all refreshed daily and covering all 50 U.S. states.

This integrated approach effectively removes the significant burden of multi-source aggregation, complex normalization processes, and continuous maintenance. It liberates engineering teams to focus their expertise on critical areas like workflow optimization, advanced automation, sophisticated AI models, and enhancing the overall user experience. All of this is achieved while relying on validated, continuously updated, and robust data infrastructure operating seamlessly in the background. This is the practical manifestation of a strategic data partnership in the modern PropTech landscape.
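For a sense of what consuming such a response involves, the sketch below parses a small JSON payload and derives occupancy, ADR, and RevPAR from it. The payload shape and field names are hypothetical, not taken from any specific API, and RevPAR is computed here per available night, which is how the metric is usually applied to short-term rentals.

```python
import json

# Hypothetical payload shape; a real provider will use its own field names.
SAMPLE_RESPONSE = """
{
  "property_id": "demo-123",
  "history": [
    {"month": "2024-01", "available_nights": 31, "booked_nights": 20, "revenue": 4000.0},
    {"month": "2024-02", "available_nights": 29, "booked_nights": 10, "revenue": 2000.0}
  ]
}
"""


def summarize_str_performance(payload: str) -> dict[str, float]:
    """Aggregate a monthly history into occupancy, ADR, and RevPAR."""
    history = json.loads(payload)["history"]
    available = sum(month["available_nights"] for month in history)
    booked = sum(month["booked_nights"] for month in history)
    revenue = sum(month["revenue"] for month in history)
    return {
        # Share of available nights that were booked.
        "occupancy": booked / available if available else 0.0,
        # Average daily rate: revenue per booked night.
        "adr": revenue / booked if booked else 0.0,
        # Revenue per available night; equals ADR x occupancy.
        "revpar": revenue / available if available else 0.0,
    }


if __name__ == "__main__":
    print(summarize_str_performance(SAMPLE_RESPONSE))
```

Because the input is already structured, the integration code stays this small; the scraping, deduplication, and calendar reconciliation behind those numbers remain the provider's responsibility.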

Conclusion: The New PropTech Playbook

In an industry as data-driven as real estate, the initial instinct towards control over data pipelines can feel like a source of strength. However, the evolving PropTech landscape increasingly demonstrates that leverage, achieved through strategic partnerships, is a far more potent driver of growth and competitive advantage.

Strategic data partnerships empower PropTech companies to scale effectively without overextending their engineering teams or inadvertently delaying critical product innovation. By integrating mature, structured datasets that encompass everything from fundamental property intelligence to detailed rental performance and sophisticated investment analytics, companies can strategically redirect their internal resources towards what truly differentiates them: enhancing workflow efficiency, developing advanced automation, building sophisticated AI capabilities, and perfecting the user experience.

The decision to build or partner is no longer a purely technical one; it is a profound strategic choice. Founders must critically assess not just whether they can build a certain capability internally, but more importantly, whether building it moves them closer to their core competitive advantage and accelerates their path to market leadership.

The PropTech companies that are experiencing the fastest and most sustainable growth in today’s competitive environment are not necessarily those that possess the largest volumes of raw data. Instead, they are the ones that excel at connecting the right data, through the most efficient and reliable infrastructure, at the optimal speed. This intelligent integration and strategic leverage represent the new playbook for scalable growth in the PropTech sector.

Who Strategic Data Partnerships Are Best For

This strategic approach to data infrastructure is particularly well-suited for PropTech teams that:

- Are prioritizing rapid product development and time-to-market.

- Need access to broad geographic data coverage without the significant investment required to build it.

- Have limited engineering resources that can be better utilized on core product innovation.

- Operate in a competitive market where speed and agility are crucial for success.

- Require accurate and up-to-date data for analytics, underwriting, or marketplace functionality.

This model may not be the ideal fit for companies whose primary business model revolves around data licensing itself, or for those that are actively building proprietary datasets as their principal differentiator. However, for the vast majority of PropTech ventures focused on leveraging data to solve specific real estate problems and enhance user experiences, strategic data partnerships offer a clear and compelling path to accelerated growth and sustained competitive advantage.

Exploring Build vs. Buy for Your Data Stack?

If your PropTech company is currently evaluating the complex decision of whether to build your own real estate data infrastructure or integrate with a specialized API partner, we invite you to engage in a detailed discussion. Our team is equipped to help you pressure-test your architectural decisions, refine your product roadmap, and ensure your data strategy aligns with your long-term scaling goals.

Book a brief introductory call with our data solutions team to explore your specific use case, discuss your technical requirements, and gain insights into how a strategic data partnership can accelerate your growth objectives.