PropTech companies are intrinsically data-dependent, with their entire operational framework built upon the bedrock of listings, comparable sales, rental rates, occupancy trends, and investment returns. Every feature, every innovation, hinges on the availability of structured and reliable information. Consequently, when founders embark on scaling their ventures, the initial instinct is almost universally to construct their data pipelines internally, aiming to own the entire technology stack and meticulously control all input sources. This approach, at first glance, appears strategically sound, with the concept of control inherently suggesting defensibility and the creation of a long-term competitive advantage.

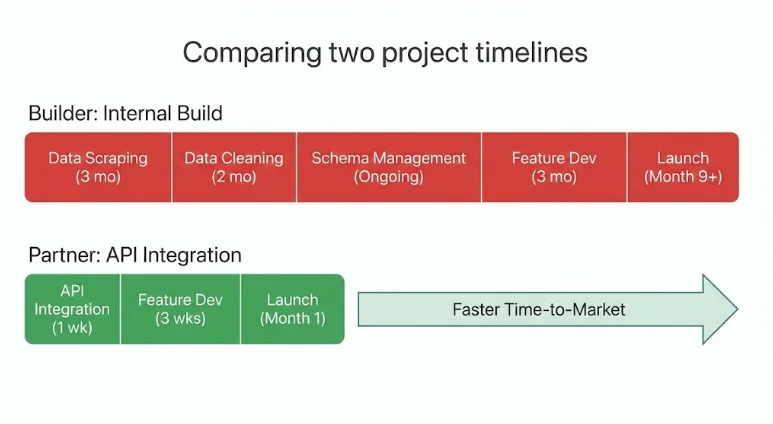

However, the practical realities of this "build-it-yourself" ethos often diverge significantly from initial expectations. Many PropTech teams discover that dedicating months of valuable engineering time to developing internal data infrastructure yields little visible return in the short term. The complexities of web scraping, where external website structures and data formats are in constant flux, lead to frequent breakage. Schema shifts across disparate data sources necessitate continuous, labor-intensive cleaning and normalization processes. As a result, core product roadmaps often stall, with engineering resources disproportionately allocated to maintaining and expanding the underlying infrastructure rather than directly enhancing customer-facing features.

In stark contrast, competitors who have strategically integrated mature, externally managed data systems are able to ship new products and features with significantly greater speed and agility. The PropTech companies that are currently achieving the most efficient and rapid scaling are not those attempting to build every single dataset from scratch. Instead, they are making deliberate, strategic decisions about which capabilities truly define their unique value proposition and differentiate them in the market, while judiciously partnering with specialized providers for the rest. In the contemporary PropTech landscape, the ultimate advantage is not absolute control over data, but rather the strategic leverage derived from accessing and utilizing it effectively.

Understanding the Strategic Data Partnership in PropTech

A strategic data partnership in the PropTech sector is defined as a long-term integration with a specialized, third-party data provider. This partnership delivers production-ready real estate data directly through robust Application Programming Interfaces (APIs), thereby eliminating the need for companies to build and maintain their own complex internal data pipelines. Instead of dedicating extensive resources to scraping, cleaning, validating, and updating fragmented data from numerous sources, teams can integrate pre-structured, continuously refreshed datasets that are immediately ready for product integration and use. This paradigm shift allows companies to treat essential data as a utility or infrastructure component, rather than as a core element of their differentiation strategy.

In practice, these strategic partnerships offer PropTech teams several tangible benefits:

- Accelerated Time-to-Market: By bypassing the lengthy development cycles of internal data infrastructure, companies can launch new features and products much faster.

- Reduced Engineering Overhead: Valuable engineering talent can be redirected from the non-differentiating task of data pipeline maintenance to core product innovation.

- Enhanced Data Quality and Reliability: Specialized data providers invest heavily in data acquisition, cleaning, validation, and ongoing maintenance, ensuring higher quality and more consistent data than most internal teams can achieve.

- Scalability and Coverage: Partners often provide comprehensive datasets covering vast geographical areas, enabling rapid expansion without the need to build out regional data collection capabilities.

- Access to Specialized Expertise: Companies benefit from the deep domain knowledge and technical expertise of data providers who focus solely on real estate data.

Crucially, engaging in a strategic data partnership is not an abdication of product ownership. It represents a strategic decision to outsource the management of commodity infrastructure, thereby enabling internal teams to redouble their efforts on developing and refining the unique aspects of their product that truly set them apart.

The Critical Decision: Build vs. Partner

Every PropTech founder, at some juncture, confronts a fundamental strategic question: should a particular capability be developed internally, or should it be sourced through a partnership? The answer to this question carries profound implications, shaping not only the product’s architecture but also its speed of development, the company’s burn rate, and its long-term scalability.

When Building Internally Makes Sense

Developing data capabilities in-house is most judicious when that specific capability is central to the company’s core differentiation and value proposition. If a feature or data type is intrinsically linked to what makes the company unique, then owning and controlling that logic strengthens its defensibility against competitors. This rationale applies most strongly to proprietary underwriting algorithms, unique scoring models, or specialized workflow automation tools that are difficult or impossible for rivals to replicate.

Internal builds are typically justified when they:

- Form the core intellectual property: The capability is the company’s unique selling proposition.

- Require deep customization beyond off-the-shelf solutions: Standard data sources do not meet specific, highly niche requirements.

- Involve sensitive or proprietary data generation: The data itself is a key output of the company’s unique processes.

- Provide a sustainable competitive moat: The ongoing investment in building and maintaining the capability creates a barrier to entry for others.

In these instances, the feature or data capability is, in essence, the company itself, making internal ownership a strategic necessity.

When Partnering Becomes the Strategic Choice

Partnerships, conversely, offer a more strategic advantage when the data layer, while foundational, is not the primary source of differentiation. Real estate datasets, by their very nature, demand continuous aggregation from numerous sources, rigorous cleaning, extensive validation, and daily updates to remain relevant. Attempting to reconstruct and maintain this complex infrastructure internally often significantly delays the development and deployment of core product innovations.

Partnering becomes a strategically sound decision when:

- The data is a necessary utility, not a core differentiator: The data is essential for functionality but doesn’t define the company’s unique value.

- The cost and complexity of internal build outweigh the benefits: The resources required to build and maintain the data infrastructure are prohibitive or divert focus from core competencies.

- Rapid scaling and market entry are paramount: Partnerships allow for immediate access to data, facilitating faster market penetration.

- Access to broader, more comprehensive datasets is needed: Specialized providers often offer data coverage that would be exceptionally difficult and expensive to replicate internally.

- The focus needs to remain on product development and user experience: Freeing up engineering resources allows for greater investment in customer-facing innovation.

In these scenarios, integration is not a mere shortcut; it represents a deliberate and strategic allocation of limited company resources toward areas that drive genuine competitive advantage. The most effective PropTech companies today are those that excel at building what makes them distinct and integrating what enables them to scale efficiently.

Partnerships as a Strategic Capital Allocation Decision

Strategic data partnerships are not simply technical workarounds; they are fundamentally capital allocation decisions. Particularly for early-stage and growth-stage PropTech companies, engineering time represents the most valuable and often the most constrained resource. Every month spent constructing and maintaining internal data pipelines is a month that could have been dedicated to enhancing product experience, developing advanced automation, or solidifying core differentiation.

Reconstructing comprehensive nationwide real estate data infrastructure internally is a Herculean task. It involves aggregating MLS records, harmonizing disparate property attributes, modeling rental income across diverse markets, cleaning short-term rental signals, validating occupancy trends, and continuously recalculating investment return metrics. This work is inherently complex, demands constant attention, and is largely invisible to the end user, so it adds little to product perception or immediate revenue.

When companies instead opt to integrate mature real estate APIs from specialized providers, they gain immediate access to a wealth of curated and structured data. This typically includes:

- Comprehensive Property Listings: Access to up-to-date information on properties for sale and rent.

- Detailed Property Attributes: Standardized data on features, square footage, year built, and other key characteristics.

- Historical Sales and Rental Data: Insights into past market activity and pricing trends.

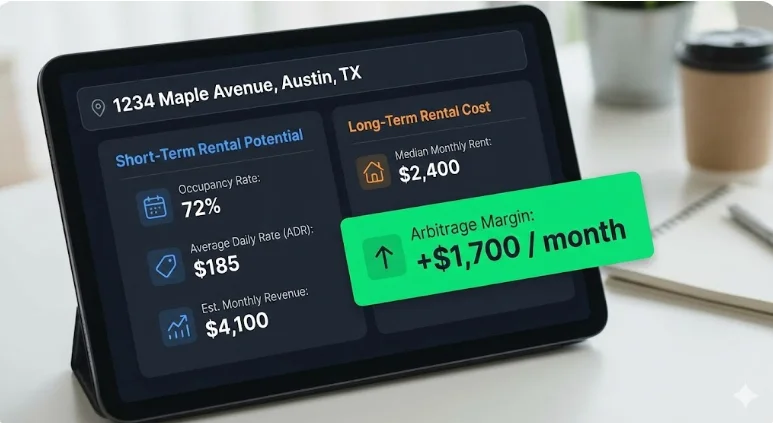

- Short-Term Rental Performance Metrics: Data such as Average Daily Rate (ADR), Occupancy, Revenue Per Available Room (RevPAR), and gross rental income.

- Long-Term Rental Comps and Estimates: Benchmarks for traditional rental income potential.

- Investment Analytics: Pre-calculated metrics like Cap Rate, Cash-on-Cash Return, and internal rates of return (IRR).

- Neighborhood Data and Demographics: Contextual information about specific locations.

All of this critical information is delivered through structured, machine-readable endpoints, making integration seamless and efficient. The result is an immediate acceleration of development cycles. Engineering teams reduce their infrastructure overhead, preserve their focus on core product innovation, and move more swiftly towards developing revenue-generating features. For venture-backed PropTech companies, in particular, these partnerships are not about outsourcing core capabilities but about strategically directing capital towards innovation while allowing specialized data providers to manage the intricate and ongoing demands of data infrastructure.
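For readers less familiar with the metrics named above, the following is a minimal Python sketch of three of them using their standard textbook definitions. It is not taken from any provider's implementation; the function names and the sample figures are illustrative assumptions, and a real provider may apply additional adjustments (expenses, vacancy, financing terms) before reporting these values.

```python
def revpar(adr: float, occupancy: float) -> float:
    """RevPAR: average daily rate multiplied by occupancy (a 0-1 fraction)."""
    if not 0.0 <= occupancy <= 1.0:
        raise ValueError("occupancy must be a fraction between 0 and 1")
    return adr * occupancy


def cap_rate(net_operating_income: float, purchase_price: float) -> float:
    """Cap rate: annual net operating income divided by purchase price."""
    if purchase_price <= 0:
        raise ValueError("purchase_price must be positive")
    return net_operating_income / purchase_price


def cash_on_cash(annual_pre_tax_cash_flow: float, total_cash_invested: float) -> float:
    """Cash-on-cash return: annual pre-tax cash flow divided by cash invested."""
    if total_cash_invested <= 0:
        raise ValueError("total_cash_invested must be positive")
    return annual_pre_tax_cash_flow / total_cash_invested


if __name__ == "__main__":
    # Illustrative numbers only, not market data.
    print(f"RevPAR: {revpar(adr=200.0, occupancy=0.65):.2f}")
    print(f"Cap rate: {cap_rate(24_000, 400_000):.2%}")
    print(f"Cash-on-cash: {cash_on_cash(8_000, 100_000):.2%}")
```

When a provider delivers these values pre-calculated, functions like these mostly serve as sanity checks on the integration rather than as production logic.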

Applied Examples Across PropTech Verticals

The strategic value of data partnerships becomes particularly evident when examining their application across various PropTech categories. While the specific business models may differ, the underlying challenge of transforming fragmented real estate data into reliable, production-ready intelligence remains a common thread.

Marketplaces

- Challenge: Property marketplaces require accurate listings, real-time pricing history, robust neighborhood benchmarks, reliable rental comparables, and actionable investment indicators across a multitude of cities and regions.

- Risk of Building Internally: Developing in-house capabilities for ingesting and normalizing MLS data, harmonizing property attributes, and building sophisticated performance models necessitates constant updates and multi-source validation. Expanding geographical coverage market by market can severely slow growth and introduce inconsistencies in data quality.

- Strategic Partnership: By integrating structured property data endpoints, sophisticated search APIs, and comprehensive neighborhood analytics with nationwide coverage, marketplaces can bypass the costly and time-consuming task of building non-differentiating infrastructure.

- Scaling Outcome: Engineering resources can be redirected towards enhancing liquidity, improving user experience, and optimizing transaction workflows, the true drivers of marketplace value.

Case Study: Rapid Deployment for STR Underwriting

A burgeoning PropTech startup focused on developing a Short-Term Rental (STR) underwriting tool leveraged an existing real estate API to deploy production-grade analytics in under two weeks. Rather than dedicating months to normalizing listings, rental performance data, and ROI metrics, the team concentrated its efforts on refining the user interface, streamlining workflows, and enhancing the deal evaluation logic. This agility allowed them to rapidly test product-market fit, onboard early adopters, and iterate quickly, all without the immediate need to hire a dedicated data engineering team.

CRMs & Deal Management Platforms

- Challenge: Users often exit CRM platforms to validate financial assumptions or conduct due diligence elsewhere, leading to fragmented workflows, reduced user engagement, and decreased platform stickiness.

- Risk of Building: Developing in-house underwriting layers requires merging diverse datasets, including property-specific data, STR metrics, long-term rental estimates, historical performance arrays, and detailed ROI calculations. Each of these datasets demands meticulous cleaning and daily updates.

- Strategic Partnership: Embedding unified real estate APIs directly within the CRM platform allows for seamless deal validation to occur within the user’s existing workflow.

- Scaling Outcome: The time required for decision-making is significantly reduced, user retention improves, and the CRM platform evolves from a mere workflow management tool into a powerful decision-making engine.

AI Underwriting & Analytics Tools

- Challenge: Machine learning systems are inherently dependent on structured, time-series datasets characterized by consistent schemas and reliable input variables.

- Risk of Building: Data obtained through web scraping often lacks proper normalization, sufficient sample size validation, or robust fallback logic. Inconsistent or unreliable inputs inevitably lead to unstable and inaccurate model outputs.

- Strategic Partnership: Integrating APIs that deliver structured datasets, such as 36 months of monthly performance data, pre-built investment metrics, and statistical confidence indicators, enables cleaner model training and significantly faster deployment cycles.

- Scaling Outcome: AI-powered tools can transition from prototype to production environments more rapidly, with a substantially reduced risk of modeling errors and inaccuracies.
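The checks described above (consistent history length, sample size validation, sane input ranges) can be sketched as a small pre-training gate. This is a hedged illustration assuming Python 3.9+: the `MonthlyPoint` record, its field names, and the `min_sample_size` threshold are hypothetical and do not reflect any specific provider's schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MonthlyPoint:
    """One month of performance data (hypothetical shape)."""
    year: int
    month: int          # 1-12
    occupancy: float    # fraction between 0 and 1
    sample_size: int    # number of listings behind the estimate


def month_index(point: MonthlyPoint) -> int:
    """Map (year, month) to a single integer so consecutive months differ by 1."""
    return point.year * 12 + (point.month - 1)


def validate_series(
    points: list[MonthlyPoint],
    expected_months: int = 36,
    min_sample_size: int = 10,
) -> list[str]:
    """Return a list of human-readable problems; an empty list means the series passed."""
    problems: list[str] = []
    if len(points) != expected_months:
        problems.append(f"expected {expected_months} months, got {len(points)}")

    ordered = sorted(points, key=month_index)
    # Any step other than +1 means a missing or duplicated month.
    for prev, cur in zip(ordered, ordered[1:]):
        if month_index(cur) - month_index(prev) != 1:
            problems.append(
                f"gap or duplicate between {prev.year}-{prev.month:02d} "
                f"and {cur.year}-{cur.month:02d}"
            )

    for p in ordered:
        if not 0.0 <= p.occupancy <= 1.0:
            problems.append(f"occupancy out of range in {p.year}-{p.month:02d}")
        if p.sample_size < min_sample_size:
            problems.append(f"sample size too small in {p.year}-{p.month:02d}")
    return problems
```

A training job could refuse to run, or fall back to a broader geography, whenever `validate_series` returns a non-empty list.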

Investment & Portfolio Platforms

- Challenge: Institutional-grade underwriting demands comprehensive data on occupancy rates, Average Daily Rates (ADR), Revenue Per Available Room (RevPAR), detailed rental comparables, sophisticated expense modeling, and accurate ROI projections across multiple markets. Furthermore, identifying high-yield rental arbitrage opportunities necessitates the concurrent evaluation of both short-term and long-term rental potential.

- Risk of Building: Replicating nationwide data coverage with daily refresh cycles is an exceptionally capital-intensive and time-consuming endeavor. Developing separate data pipelines for STR and LTR data effectively doubles the infrastructure burden.

- Strategic Partnership: Leveraging harmonized datasets with unified schemas and pre-modeled financial indicators effectively eliminates this infrastructure drag. Partnering for an API that provides both STR and LTR data unlocks immediate arbitrage analysis capabilities without the need for managing multiple, competing data subscriptions and complex integrations.

- Scaling Outcome: Investment platforms can deliver institutional-grade analytics and sophisticated arbitrage modeling capabilities without incurring the massive overhead typically associated with such operations, thereby accelerating market expansion.
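As a rough illustration of the arbitrage comparison described above, the sketch below contrasts gross annual revenue under a short-term and a long-term strategy. It is deliberately simplified: it ignores expenses, taxes, platform fees, and regulation, and the function names and inputs are assumptions chosen for illustration only.

```python
DAYS_PER_YEAR = 365
MONTHS_PER_YEAR = 12


def annual_str_revenue(adr: float, occupancy: float) -> float:
    """Gross short-term rental revenue: nightly rate x occupancy x nights per year."""
    return adr * occupancy * DAYS_PER_YEAR


def annual_ltr_revenue(monthly_rent: float) -> float:
    """Gross long-term rental revenue: monthly rent x 12."""
    return monthly_rent * MONTHS_PER_YEAR


def compare_strategies(adr: float, occupancy: float, monthly_rent: float) -> tuple[str, float]:
    """Return the higher-grossing strategy and the STR-minus-LTR revenue gap.

    A tie (gap of zero) is reported as "LTR", the lower-effort option.
    """
    gap = annual_str_revenue(adr, occupancy) - annual_ltr_revenue(monthly_rent)
    return ("STR" if gap > 0 else "LTR"), gap


if __name__ == "__main__":
    # Illustrative inputs only, not market data.
    strategy, gap = compare_strategies(adr=180.0, occupancy=0.60, monthly_rent=2_500.0)
    print(f"Higher gross revenue: {strategy} (gap: {gap:,.0f} per year)")
```

With both STR and LTR inputs coming from one harmonized source, a comparison like this stays a few lines of product logic instead of a reconciliation exercise across two pipelines.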

The Competitive Advantage of Speed in Real Estate Markets

Real estate markets are dynamic and operate on their own timelines, often shifting faster than product development cycles can follow. Short-term rental revenues, for instance, can swing by 20% to 50% between peak and off-peak seasons. Supply levels are constantly in flux as new listings enter the market. Regulatory environments can evolve swiftly, and changes in interest rates can alter underwriting assumptions almost overnight.

In such a volatile environment, speed is not merely a nice-to-have; it is a strategic imperative. Companies that choose to build every data layer internally often spend many months stabilizing their data pipelines before they can even launch initial features. By the time their internal infrastructure is deemed ready, the market landscape may have already shifted significantly, diminishing the competitive edge of the product.

In contrast, companies that strategically integrate mature, externally managed data infrastructure gain a distinct advantage:

- Faster Feature Deployment: They can launch new revenue-generating features and product enhancements much more quickly.

- Quicker Market Feedback: Earlier product launches enable faster collection of user feedback, allowing for rapid iteration and improvement.

- Enhanced Competitive Positioning: Being first to market or having a more refined product due to faster iteration builds stronger brand recognition and market share.

- Agile Response to Market Changes: The ability to quickly integrate new data or adapt features allows companies to respond effectively to evolving market conditions and regulatory changes.

This compounding effect of speed translates directly into accelerated feedback loops, increased revenue generation, and a stronger market position. In the highly competitive PropTech arena, the difference between leading and lagging is frequently measured not by the novelty of an idea, but by the velocity of its execution.

The Modern Scaling Model: Builders vs. Orchestrators

As the PropTech sector continues to mature, a distinct pattern in scaling strategies is emerging. Companies tend to align with one of two primary models: "Builders" or "Orchestrators."

Builders aspire to own and control every layer of their technology stack. This involves ingesting listings directly, normalizing property data, modeling rental performance, calculating ROI, and meticulously maintaining all update cycles internally. While this approach offers the perceived benefit of absolute control, it invariably leads to significant infrastructure drag. This includes the ongoing burden of maintenance, the accumulation of technical debt, and a generally slower pace of feature development.

Orchestrators, on the other hand, adopt a different philosophy. They strategically integrate best-in-class data providers for foundational datasets, concentrating their internal engineering and product resources on areas of true differentiation. These typically include user experience, advanced automation, sophisticated AI logic, and innovative workflow design.

Builders often compete on the perceived completeness of their data control, while orchestrators compete on speed and focused innovation. In today’s increasingly data-dense real estate markets, differentiation rarely stems from the act of rebuilding commodity infrastructure. Instead, it arises from the intelligence and creativity with which that foundational infrastructure is applied. The PropTech companies achieving the most efficient and rapid scaling today predominantly resemble orchestrators. They effectively leverage strategic partnerships to accelerate their development cycles and build smarter, more focused products.

What an Infrastructure-Level Data Partnership Looks Like in Practice

In practical terms, a strategic data partnership involves integrating a unified, production-ready real estate API rather than attempting to build and manage fragmented data pipelines internally. Infrastructure-grade solutions, such as the Mashvisor API, consolidate vast amounts of property data, including MLS-style listings, STR performance metrics, long-term rental estimates, and sophisticated investment analytics. This data is delivered through structured REST endpoints specifically designed for PropTech applications.

Instead of expending considerable effort stitching together scraped calendar data, rental platforms, and public records, PropTech teams can access clean, machine-readable JSON outputs. These outputs can include critical metrics such as occupancy rates, ADR, revenue, RevPAR, detailed rental comparables, built-in ROI metrics, and even 36 months of historical performance data, all refreshed daily and covering all 50 U.S. states.

This approach effectively removes the substantial burden of multi-source aggregation, data normalization, and ongoing maintenance from the internal team. It liberates engineering resources, allowing them to concentrate on optimizing workflows, developing advanced AI models, and enhancing the overall user experience, all while relying on a validated, continuously updated data infrastructure operating seamlessly beneath the surface. This is the practical embodiment of a strategic data partnership.
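One way to picture the integration is a thin adapter that turns a provider's JSON response into an internal typed record, so product code never depends on the provider's field names directly. The sketch below is hypothetical throughout: the payload shape, the keys, and the `RentalSnapshot` type are assumptions made for illustration and are not the documented schema of the Mashvisor API or any other provider.

```python
import json
from dataclasses import dataclass

# Hypothetical response body; real providers define their own field names.
SAMPLE_PAYLOAD = """
{
  "occupancy": 0.62,
  "adr": 185.0,
  "monthly_revenue": 3480.0,
  "months_of_history": 36
}
"""

REQUIRED_KEYS = ("occupancy", "adr", "monthly_revenue", "months_of_history")


@dataclass(frozen=True)
class RentalSnapshot:
    """Internal representation used by product code (illustrative)."""
    occupancy: float
    adr: float
    monthly_revenue: float
    months_of_history: int


def parse_snapshot(raw: str) -> RentalSnapshot:
    """Parse a JSON string into a RentalSnapshot, failing loudly on missing keys."""
    data = json.loads(raw)
    missing = [key for key in REQUIRED_KEYS if key not in data]
    if missing:
        raise ValueError(f"payload is missing keys: {', '.join(missing)}")
    return RentalSnapshot(
        occupancy=float(data["occupancy"]),
        adr=float(data["adr"]),
        monthly_revenue=float(data["monthly_revenue"]),
        months_of_history=int(data["months_of_history"]),
    )


if __name__ == "__main__":
    print(parse_snapshot(SAMPLE_PAYLOAD))
```

Keeping the mapping in one place means a schema change on the provider side, or a switch to a different provider, touches a single function rather than every feature that reads rental data.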

Conclusion: The Evolving PropTech Playbook

In an industry as data-intensive as real estate, the instinct for absolute control over data can feel like a source of strength. However, practical experience increasingly demonstrates that strategic leverage is a more potent driver of success. Strategic data partnerships empower PropTech companies to scale efficiently without overextending their engineering teams or compromising the pace of product innovation. By integrating mature, structured datasets that span property intelligence, rental performance, and investment analytics, companies can strategically focus their internal efforts on what truly differentiates them: superior workflows, advanced automation, innovative AI applications, and exceptional user experiences.

The decision to build or partner is no longer a purely technical one; it has evolved into a critical strategic choice. Founders must move beyond asking solely if they can build a capability internally, and instead, critically assess whether building it moves them closer to their core competitive advantage. The PropTech companies experiencing the most rapid growth in today’s dynamic landscape are not necessarily those that collect the most raw data. Rather, they are the organizations that effectively connect the right data, through the most appropriate infrastructure, with the greatest speed and agility. This represents the new, modern playbook for sustained growth in the PropTech sector.

Who Benefits Most from Strategic Data Partnerships

This strategic approach to data infrastructure is particularly well-suited for PropTech teams that:

- Prioritize rapid product development and market entry.

- Operate with lean engineering teams and limited capital for infrastructure build-out.

- Focus on differentiating through user experience, workflow automation, or unique AI models.

- Require access to broad, reliable, and up-to-date real estate data across multiple markets.

- Aim to reduce operational overhead and technical debt associated with data management.

This model may not be the optimal choice for companies whose core business model is data licensing itself, or for those whose primary differentiator lies in developing proprietary, unique datasets that cannot be sourced externally. However, for a vast majority of PropTech ventures seeking to scale efficiently and compete effectively, strategic data partnerships offer a clear path to accelerated growth and sustained market leadership. These solutions are increasingly trusted by PropTech startups, established investment platforms, and sophisticated analytics tools deployed in production environments.