PropTech companies are, by their very nature, data-dependent enterprises. Their products, from property listings and comparative market analyses to rental rates, occupancy trends, and investment returns, depend on structured and reliable information. This reliance leads many founders to a common instinct: build data pipelines internally, own the entire technology stack, and control every input. The impulse often stems from a desire for strategic defensibility and long-term competitive advantage. However, the practical realities of scaling in the PropTech sector increasingly point to a more nuanced truth.

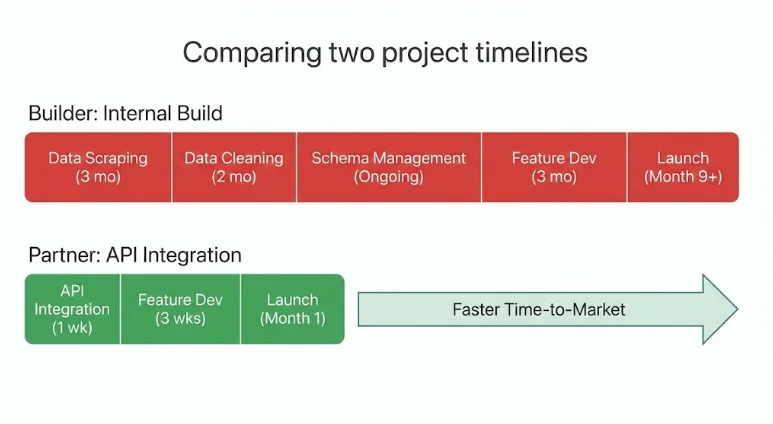

The journey of building an internal data infrastructure is fraught with challenges that can significantly impede a company’s growth trajectory. What appears strategic on paper can, in practice, consume months of invaluable engineering time before delivering any tangible product value. Data scrapers are notoriously fragile, prone to breaking with the slightest shift in website structures or data formats. Schemas, the organizational blueprints of data, are dynamic and subject to frequent changes. Integrating and normalizing data from multiple disparate sources demands constant, meticulous cleaning and validation. As a result, product roadmaps often stall, with engineering resources diverted to the quiet, behind-the-scenes expansion of infrastructure rather than the development of customer-facing features.
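To make the normalization burden concrete, here is a minimal Python sketch of mapping records from two sources onto one shared schema. The source names, field names, and the `Listing` schema are hypothetical and chosen only for illustration; a real pipeline would need many more fields and rules.

```python
from dataclasses import dataclass
from typing import Any, Optional


@dataclass(frozen=True)
class Listing:
    """Shared schema that every source is mapped onto (hypothetical)."""
    address: str
    bedrooms: int
    price_usd: float


# Hypothetical field names used by two imaginary sources.
FIELD_MAPS = {
    "source_a": {"address": "addr", "bedrooms": "beds", "price_usd": "list_price"},
    "source_b": {"address": "street_address", "bedrooms": "bedroom_count", "price_usd": "price"},
}


def parse_price(value: Any) -> float:
    """Accept numbers or strings such as '$450,000'."""
    if isinstance(value, (int, float)):
        return float(value)
    return float(str(value).replace("$", "").replace(",", "").strip())


def normalize(source: str, raw: dict) -> Optional[Listing]:
    """Map a raw record onto the shared schema; return None if it fails validation."""
    mapping = FIELD_MAPS[source]
    try:
        listing = Listing(
            address=str(raw[mapping["address"]]).strip(),
            bedrooms=int(raw[mapping["bedrooms"]]),
            price_usd=parse_price(raw[mapping["price_usd"]]),
        )
    except (KeyError, ValueError, TypeError):
        return None  # a renamed or malformed upstream field surfaces here
    if not listing.address or listing.bedrooms < 0 or listing.price_usd <= 0:
        return None
    return listing
```

Every time a source renames a field or changes a format, a mapping like `FIELD_MAPS` has to be updated, which is the ongoing maintenance cost described above.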

Meanwhile, competitors who have strategically integrated mature, external data systems are able to ship new products and features to market with significantly greater speed and agility. The companies that are currently achieving the most efficient scaling in the PropTech landscape are not attempting to build every dataset from scratch. Instead, they are making deliberate, strategic decisions about which aspects of their operations truly define their unique differentiation and are actively partnering with specialized providers for the rest. In the contemporary PropTech ecosystem, the paramount advantage is no longer absolute control, but rather strategic leverage.

Understanding Strategic Data Partnerships in PropTech

A strategic data partnership in PropTech can be defined as a long-term integration with a specialized, third-party data provider that delivers production-ready real estate data through robust APIs. This approach stands in stark contrast to the arduous and often inefficient process of building and maintaining internal data pipelines. Rather than dedicating significant resources to scraping, cleaning, and updating fragmented data sources, PropTech teams can seamlessly integrate meticulously structured datasets that are continuously refreshed and immediately ready for product integration. This paradigm shift allows companies to treat data as a fundamental utility, an essential infrastructure component, rather than a core area of product differentiation.

In practice, these strategic partnerships offer PropTech companies a multitude of tangible benefits. They accelerate time-to-market for new features and products by removing the significant bottleneck of data infrastructure development. They optimize engineering resources, allowing highly skilled developers to focus on core product innovation and user experience rather than the complex and ongoing maintenance of data pipelines. Furthermore, they ensure access to consistent, high-quality data, which is crucial for reliable analytics, accurate underwriting, and effective decision-making. Critically, a strategic data partnership is not an abdication of product ownership. Instead, it represents a deliberate decision to outsource the commodity aspects of data infrastructure, thereby enabling companies to intensify their focus on the unique value propositions that truly set them apart.

The Critical Juncture: Build vs. Partner

Every PropTech founder inevitably confronts a pivotal strategic decision: should they build a particular capability in-house, or should they seek a partnership for it? This decision transcends mere technical architecture; it profoundly influences a company’s speed of execution, its burn rate, and its long-term scalability.

When Building Internally Makes Strategic Sense

Building a capability internally is typically justified when that capability represents the company’s core differentiation. If a specific feature or data processing logic is central to the company’s value proposition, owning and controlling that logic inherently strengthens its defensibility. This often applies to proprietary underwriting algorithms, unique scoring models, or sophisticated workflow automation processes that are difficult for competitors to replicate.

Internal builds are most justifiable when they:

- Define the core intellectual property: The capability is the product itself, not merely a supporting function.

- Offer a unique competitive moat: The internal development provides a distinct advantage that cannot be easily sourced externally.

- Require highly specialized, in-house expertise: The complexity and domain-specific knowledge are not readily available from external vendors.

- Involve sensitive or proprietary data generation: The company’s competitive edge is tied to the unique way it collects or processes its own data.

In these specific scenarios, the feature or capability is intrinsically linked to the company’s identity and market position.

When Partnering Becomes the Strategic Imperative

Partnerships, conversely, become the more advantageous route when the data layer, while foundational, is not the primary differentiator. Real estate datasets need continuous aggregation, cleaning, and validation, along with daily updates. Reconstructing this infrastructure internally often delays core product development significantly.

Partnering becomes a strategic imperative when:

- The data is a commodity input: The value lies not in possessing the data itself, but in how it is used to build a unique product.

- Internal build timelines are prohibitive: The time and resources required to build and maintain the data infrastructure would significantly delay market entry or feature release.

- External providers offer superior scale and expertise: Specialized data vendors have already invested heavily in building robust, comprehensive, and continuously updated data systems.

- Cost efficiency is paramount: Outsourcing data infrastructure can be significantly more cost-effective than building and maintaining it internally, especially for early-stage and growth-stage companies.

In these situations, forming an integration is not a shortcut; it is a strategic allocation of precious resources. The most effective PropTech teams are those that judiciously build what makes them uniquely different and integrate what makes them scalable.

Strategic Data Partnerships as a Capital Allocation Strategy

The decision to engage in strategic data partnerships is not merely a technical one; it is fundamentally a capital allocation decision. In the critical early and growth stages of PropTech companies, engineering time stands out as the most expensive and finite resource. Every month spent meticulously building internal data pipelines is a month not spent enhancing product experience, developing innovative automation, or solidifying core differentiation.

The undertaking of reconstructing nationwide real estate data infrastructure internally is a monumental task. It involves aggregating MLS records, harmonizing property attributes across disparate sources, modeling rental income potential, cleaning and validating short-term rental signals, rigorously verifying occupancy trends, calculating complex ROI metrics, and ensuring that all this information is refreshed daily. This work is inherently complex, demands continuous effort, and, crucially, often remains largely invisible to the end users of the product.

When companies instead choose to integrate mature real estate APIs from specialized providers, they gain immediate access to a suite of invaluable resources. This includes comprehensive property data, accurate listing information, historical performance metrics, neighborhood benchmarks, and sophisticated investment analytics. All of this is delivered through structured, machine-readable endpoints, designed for seamless integration into PropTech applications. The outcome is an immediate acceleration of development cycles. Teams can significantly reduce their infrastructure overhead, preserve their critical engineering focus on value-generating features, and move with greater speed toward revenue-generating aspects of their business. For venture-backed PropTech companies, in particular, these partnerships are not about outsourcing capability; they are about strategically directing capital toward innovation and allowing specialized data providers to manage the complex, underlying data infrastructure.

Applied Examples Across PropTech Verticals

The strategic value of embracing data partnerships becomes particularly evident when examined across various PropTech categories. While business models may differ, the underlying challenge of scaling often remains consistent: the transformation of fragmented real estate data into reliable, production-ready intelligence.

Marketplaces: The Need for Speed and Breadth

Property marketplaces face the immense challenge of providing accurate listings, comprehensive pricing history, granular neighborhood benchmarks, reliable rental comparables, and insightful investment indicators across numerous diverse cities. The risk of attempting to build this internally is substantial. Ingesting MLS data, normalizing property attributes, and developing sophisticated performance modeling capabilities require constant updates and rigorous multi-source validation. Expanding market coverage incrementally, city by city, significantly slows growth and introduces inevitable data inconsistencies.

A strategic partnership, however, allows these marketplaces to integrate structured property endpoints, powerful search APIs, and detailed neighborhood analytics with nationwide coverage. This approach liberates them from the burden of building infrastructure that does not inherently differentiate their core offering. The scaling outcome is clear: engineering resources can be redirected to focus on critical drivers of marketplace value, such as enhancing liquidity, refining user experience, and optimizing transaction workflows.

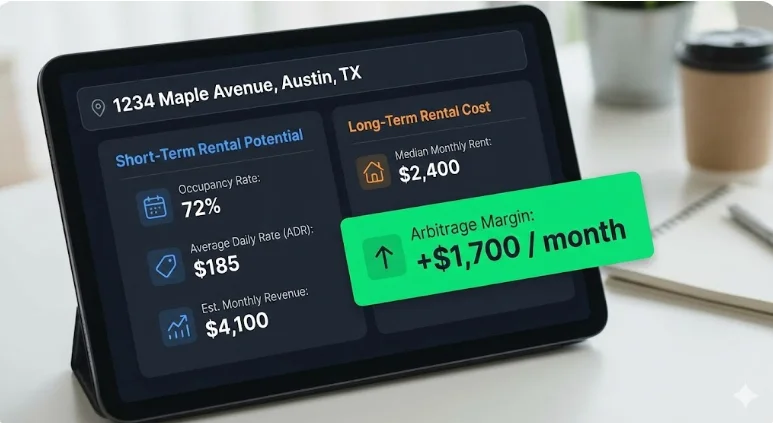

Consider a real-world example: a PropTech startup developing a tool for underwriting short-term rentals (STRs). By leveraging an existing, mature real estate API, this startup was able to launch production-grade analytics in under two weeks. Instead of spending months on the laborious task of normalizing listings, rental performance data, and ROI metrics, the team was able to concentrate on user interface design, workflow optimization, and the nuanced logic of deal evaluation. This agility enabled them to rapidly test their product-market fit, onboard early adopters, and iterate quickly, all without the immediate need to build a dedicated data engineering team.

CRMs and Deal Management Platforms: Enhancing Workflow and Stickiness

Customer Relationship Management (CRM) and deal management platforms often face a significant challenge: users frequently leave the platform to validate financial assumptions elsewhere, which fragments workflows and weakens platform stickiness. Building an in-house underwriting layer is risky because it requires merging diverse datasets, including property data, short-term rental metrics, long-term rental estimates, historical performance arrays, and ROI calculations. Each of these datasets requires meticulous cleaning and daily updates.

By embedding unified real estate APIs directly within their platforms, these CRMs can enable deal validation to occur seamlessly within the existing workflow. This strategic integration drastically reduces the time to decision for users, significantly improves user retention, and effectively transforms the CRM from a simple workflow tool into a powerful decision-making engine.

AI Underwriting and Analytics Tools: Fueling Machine Learning with Reliable Data

Machine learning systems, particularly those in underwriting and analytics, are critically dependent on structured, time-series datasets that possess consistent schemas and reliable inputs. The inherent risks of building these data pipelines internally are significant. Scraped data often lacks essential normalization, robust sample size validation, or effective fallback logic. Inconsistent inputs, in turn, inevitably lead to unstable and unreliable outputs, undermining the efficacy of the AI models.

Conversely, integrating APIs that deliver comprehensive data, such as 36 months of monthly performance data, pre-built investment metrics, and statistical confidence indicators, enables cleaner model training and a faster path to production deployment. This strategic approach allows AI tools to transition from prototype to production more rapidly, with a substantially reduced risk of modeling errors stemming from data quality issues.
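As an illustration of the sample-size validation and fallback logic mentioned above, the following Python sketch cleans a monthly series before it reaches a model. The threshold `MIN_SAMPLE_SIZE`, the function name, and the idea of a single fallback value (for example, a market-level average) are assumptions made for this example, not a description of any provider's method.

```python
from typing import List, Optional, Sequence

EXPECTED_MONTHS = 36  # matches the 36-month history mentioned above
MIN_SAMPLE_SIZE = 5   # hypothetical threshold; tune per use case


def clean_series(
    values: Sequence[Optional[float]],
    sample_sizes: Sequence[int],
    fallback: float,
) -> List[float]:
    """Replace missing or thinly sampled months with a fallback value."""
    if len(values) != EXPECTED_MONTHS or len(sample_sizes) != EXPECTED_MONTHS:
        raise ValueError(f"expected {EXPECTED_MONTHS} months of data")
    cleaned = []
    for value, n in zip(values, sample_sizes):
        if value is None or n < MIN_SAMPLE_SIZE:
            cleaned.append(fallback)  # too little evidence for this month
        else:
            cleaned.append(float(value))
    return cleaned
```

Rejecting series of the wrong length up front keeps the schema consistent, so a model never trains on inputs of varying shape.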

Investment and Portfolio Platforms: Delivering Institutional-Grade Insights

Institutional-grade underwriting, whether for investment or portfolio management, demands access to a wide array of data points: occupancy rates, Average Daily Rate (ADR), Revenue Per Available Room (RevPAR), rental comparables, detailed expense modeling, and projected ROI across multiple markets. Furthermore, identifying highly profitable rental arbitrage opportunities necessitates a sophisticated evaluation of both short-term and long-term rental potential side-by-side.
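For readers less familiar with these hospitality metrics, the standard definitions can be sketched in a few lines of Python. The function names and the sample figures are illustrative; the zero-denominator handling is a choice made for this sketch.

```python
def occupancy_rate(booked_nights: int, available_nights: int) -> float:
    """Share of available nights that were booked."""
    if available_nights <= 0:
        raise ValueError("available_nights must be positive")
    return booked_nights / available_nights


def adr(revenue: float, booked_nights: int) -> float:
    """Average Daily Rate: revenue per booked night (0.0 if nothing was booked)."""
    return revenue / booked_nights if booked_nights > 0 else 0.0


def revpar(revenue: float, available_nights: int) -> float:
    """Revenue per available night; equal to ADR multiplied by occupancy."""
    if available_nights <= 0:
        raise ValueError("available_nights must be positive")
    return revenue / available_nights


# Hypothetical month: $4,500 revenue, 18 of 30 nights booked.
print(occupancy_rate(18, 30))  # 0.6
print(adr(4500, 18))           # 250.0
print(revpar(4500, 30))        # 150.0
```

Because RevPAR equals ADR times occupancy, it captures both pricing and demand in a single number, which is why it is commonly used to compare markets.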

Attempting to reconstruct this nationwide coverage internally, with daily refresh cycles, is both capital-intensive and slow. Building separate data pipelines for short-term and long-term rental data effectively doubles the infrastructure burden. By leveraging harmonized datasets with unified schemas and pre-modeled financial indicators through a strategic partnership, platforms can eliminate this infrastructure drag. Partnering for an API that provides both STR and LTR data unlocks immediate arbitrage analysis without the need to manage multiple, competing data subscriptions and their associated integration complexities.
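A side-by-side STR and LTR comparison can be sketched as follows. This is a deliberately simplified Python example: the `RentalEstimate` record, the 30-night month, and the single cost figure are all assumptions for illustration, and a real underwriting model would account for many more factors.

```python
from dataclasses import dataclass

NIGHTS_PER_MONTH = 30  # simplifying assumption


@dataclass(frozen=True)
class RentalEstimate:
    """Hypothetical unified record holding STR and LTR estimates for one property."""
    adr: float                # average daily rate, USD
    occupancy: float          # fraction between 0 and 1
    str_monthly_costs: float  # cleaning, platform fees, utilities, etc.
    ltr_monthly_rent: float   # estimated long-term rent, USD


def str_monthly_net(e: RentalEstimate) -> float:
    """Estimated short-term rental income per month after STR-specific costs."""
    return e.adr * e.occupancy * NIGHTS_PER_MONTH - e.str_monthly_costs


def arbitrage_spread(e: RentalEstimate) -> float:
    """Positive when short-term renting is expected to beat long-term rent."""
    return str_monthly_net(e) - e.ltr_monthly_rent


def better_strategy(e: RentalEstimate) -> str:
    return "STR" if arbitrage_spread(e) > 0 else "LTR"


estimate = RentalEstimate(adr=200.0, occupancy=0.5, str_monthly_costs=800.0, ltr_monthly_rent=1800.0)
print(arbitrage_spread(estimate))  # 400.0
print(better_strategy(estimate))   # STR
```

The comparison is only this simple when both estimates arrive in one record with one schema. With separate pipelines, matching the STR and LTR figures to the same property becomes its own integration task.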

The Unrivaled Competitive Advantage of Speed

Real estate markets are dynamic and often volatile, and they do not wait for product roadmaps to catch up. Short-term rental revenues can fluctuate dramatically, often by 20% to 50% between peak and off-peak seasons. Supply levels shift constantly as new listings enter the market. Regulatory environments evolve, and interest rate changes can alter underwriting assumptions almost overnight.
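One simple way to quantify a seasonal swing like this is to measure the drop from the best month to the worst month as a fraction of the best month. That definition, the function name, and the revenue figures in the Python sketch below are assumptions for illustration; other definitions (for example, relative to the annual average) are equally reasonable.

```python
from typing import Sequence


def seasonal_swing(monthly_revenue: Sequence[float]) -> float:
    """Drop from the best month to the worst month, as a fraction of the best month."""
    if not monthly_revenue:
        raise ValueError("monthly_revenue must not be empty")
    peak = max(monthly_revenue)
    trough = min(monthly_revenue)
    if peak <= 0:
        raise ValueError("peak revenue must be positive")
    return (peak - trough) / peak


# Hypothetical revenue for one listing over a year (USD).
revenue = [2400, 2600, 3000, 3400, 3800, 4000, 4000, 3900, 3300, 2900, 2500, 2400]
print(f"{seasonal_swing(revenue):.0%}")  # prints "40%"
```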

In such a fluid environment, speed is not merely a superficial enhancement; it is a fundamental strategic imperative. Companies that commit to building every data layer internally often find themselves spending months merely stabilizing their data pipelines before they can even contemplate launching new features. By the time their internal infrastructure is deemed ready, the market landscape may have already shifted significantly.

In stark contrast, companies that strategically integrate mature, external data infrastructure are empowered to:

- Launch features and products significantly faster: They bypass the lengthy data pipeline development cycle.

- Respond rapidly to market shifts: They can quickly incorporate new data points or adapt to changing market conditions.

- Iterate based on real-time feedback: Faster deployment allows for quicker collection of user feedback and market insights, driving continuous improvement.

- Capture market opportunities before competitors: Speed translates directly into a first-mover advantage in many cases.

This compounding effect of speed is profound. Shipping earlier accelerates the entire cycle of feedback, revenue generation, and brand positioning. In the increasingly competitive PropTech categories, the difference between leading the market and lagging behind is often measured not in the brilliance of ideas, but in the velocity of their execution.

The Modern Scaling Model: Builders vs. Orchestrators

As the PropTech industry matures, a discernible pattern is emerging in how companies approach scaling. They tend to fall into one of two distinct models: builders or orchestrators.

Builders are companies that strive to own and control every layer of their technology stack. They ingest listings, normalize property data, model rental performance, calculate ROI, and maintain internal update cycles for all their data needs. While this approach offers the appearance of complete control, it typically creates significant infrastructure drag: ongoing maintenance burdens, accumulating technical debt, and a slower pace of feature development and innovation.

Orchestrators, on the other hand, adopt a fundamentally different strategy. They proactively integrate best-in-class data providers for their foundational datasets and concentrate their internal engineering resources on areas of true differentiation. This includes focusing on user experience design, advanced automation, sophisticated AI logic, and innovative workflow development. Builders compete primarily on the completeness of their data collection and processing capabilities. Orchestrators, however, compete on speed, focus, and the intelligent application of data.

In markets that are increasingly data-dense, true differentiation rarely stems from the act of rebuilding commodity infrastructure. Instead, it arises from the intelligent and innovative ways that this readily available infrastructure is applied. The PropTech companies that are currently scaling most efficiently and effectively are those that operate as orchestrators. They strategically leverage partnerships to accelerate their progress and build smarter, more impactful products.

What an Infrastructure-Level Data Partnership Looks Like in Practice

In practical terms, a strategic data partnership signifies the integration of a unified, production-ready real estate API, rather than the arduous process of building fragmented internal data pipelines. Infrastructure-grade solutions, such as the Mashvisor API, exemplify this approach by consolidating a vast array of property data. This includes comprehensive property intelligence, MLS-style listings, detailed short-term rental performance metrics, accurate long-term rental estimates, and sophisticated investment analytics. All of this is presented through structured REST endpoints, meticulously designed for seamless integration into PropTech applications.

Instead of laboriously stitching together scraped calendar data, rental platforms, and public records, PropTech teams can access clean, machine-readable JSON data. This data includes critical metrics like occupancy rates, ADR, revenue, RevPAR, rental comparables, pre-built ROI metrics, and even 36 months of historical performance arrays, all refreshed daily and covering all 50 US states. This integrated approach effectively removes the substantial burden of multi-source aggregation, complex normalization processes, and continuous maintenance. It liberates engineering teams to concentrate on workflow optimization, advanced automation, sophisticated AI models, and the enhancement of user experience, all while relying on a validated, continuously updated data infrastructure that operates reliably beneath the surface. This is the tangible reality of a strategic data partnership in execution.
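To show what consuming machine-readable JSON looks like on the application side, here is a short Python sketch that parses a payload into a typed record. The payload shape and field names are hypothetical and are not taken from the Mashvisor API or any other provider's documentation; consult the actual API reference for real field names.

```python
import json
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class StrPerformance:
    """Typed view of a hypothetical short-term rental performance payload."""
    occupancy: float
    adr: float
    revpar: float
    monthly_revenue: List[float]


def parse_performance(payload: str) -> StrPerformance:
    """Parse a JSON string; raises KeyError or ValueError if the payload is malformed."""
    data = json.loads(payload)
    return StrPerformance(
        occupancy=float(data["occupancy"]),
        adr=float(data["adr"]),
        revpar=float(data["revpar"]),
        monthly_revenue=[float(x) for x in data["monthly_revenue"]],
    )


# Hypothetical payload, shortened to three months for readability.
sample = '{"occupancy": 0.6, "adr": 250, "revpar": 150, "monthly_revenue": [4500, 4200, 3900]}'
print(parse_performance(sample).adr)  # 250.0
```

Parsing into a typed record at the boundary means the rest of the application works with validated values rather than raw dictionaries.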

Conclusion: The Evolving PropTech Playbook

In the data-driven landscape of the real estate industry, the instinct for control can often be mistaken for strength. However, the prevailing evidence suggests that strategic leverage is a far more potent force for sustainable growth. Strategic data partnerships empower PropTech companies to scale effectively without overextending their valuable engineering teams or inadvertently delaying crucial product innovation. By integrating mature, structured datasets—spanning property intelligence, rental performance, and investment analytics—companies can strategically reallocate their internal resources to focus on the elements that truly differentiate them: refined workflows, advanced automation, cutting-edge AI capabilities, and an exceptional user experience.

The decision to build or partner is no longer a purely technical consideration; it is a deeply strategic one. Founders must critically assess not just whether they can build a particular capability, but more importantly, whether building it moves them closer to their core competitive advantage. The PropTech companies that are scaling most rapidly and sustainably in today’s market are not necessarily those that collect the most raw data. Instead, they are the organizations that excel at connecting the right data, through the right infrastructure, with the utmost speed. This is the modern playbook for scalable growth in the PropTech sector.

Who Benefits Most from Strategic Data Partnerships?

This strategic approach to data infrastructure is most effectively utilized by PropTech teams that:

- Prioritize rapid product development and market entry.

- Seek to optimize engineering resources for core differentiation.

- Require access to comprehensive, accurate, and continuously updated real estate data without the burden of infrastructure management.

- Operate in competitive markets where speed and agility are paramount.

- Are focused on leveraging data to enhance user experience and automate complex processes.

This model may not be the optimal choice for companies whose primary business model revolves around data licensing or for those whose core differentiator is the creation of proprietary, unique datasets. However, for the vast majority of PropTech innovators, integrating infrastructure-level data solutions is proving to be a critical enabler of scalable growth. This approach is increasingly trusted by PropTech startups, established investment platforms, and advanced analytics tools operating in demanding production environments.

Exploring the Build vs. Buy Decision for Your Data Stack?

For PropTech leaders currently evaluating the complex decision of whether to build their own real estate data infrastructure or to integrate a robust API partner, a thorough architectural and roadmap review can be invaluable. Engaging with experts who understand the nuances of both approaches can help clarify the optimal path forward. A short introductory call with a specialized data team can provide a platform to pressure-test your current architecture, discuss technical requirements, and align on your long-term scaling goals, ensuring that your data strategy is a powerful engine for growth, not a bottleneck.