The real estate technology (PropTech) sector is built on data, and its approach to that data is changing. For years, founders aiming to scale have instinctively built and maintained internal data pipelines, believing that owning the entire data stack, from listings and comparables to rental rates and occupancy trends, was the key to defensibility and long-term advantage. This approach seems logical, but it often leads to prolonged engineering cycles, costly infrastructure, and slower innovation, all of which hinder growth. In contrast, the PropTech companies scaling most efficiently today partner for foundational data needs and focus their internal resources on core differentiators. The shift reflects a crucial realization: in modern PropTech, competitive advantage lies not in absolute control but in intelligent leverage.

The Hidden Costs of Internal Data Pipelines

The initial allure of building an in-house data infrastructure is understandable. Founders envision a robust, proprietary system that offers complete control over inputs and outputs and could create a significant moat against competitors. In practice, the endeavor often falls short of that ideal. Developing comprehensive data pipelines is arduous and time-consuming. Engineering teams can spend months, even years, scraping data from disparate sources, developing complex logic for cleaning and normalization, and building systems that cope with constantly shifting source schemas and scrapers that break whenever a source changes.

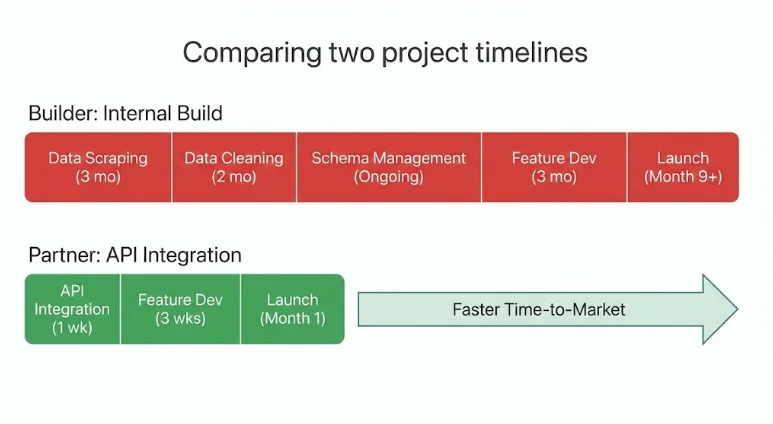

During this extended development phase, the visible value delivered to the end-user is minimal. Product roadmaps are frequently derailed as engineering resources are diverted to address the ever-expanding needs of data infrastructure. Meanwhile, competitors who have opted for more streamlined data integration strategies are already shipping advanced features and gaining market traction. This creates a critical disadvantage, particularly in a fast-moving industry like real estate where market dynamics can shift rapidly.

A prime example of this challenge can be observed in the early days of many PropTech startups. Consider a company focused on developing an innovative property valuation tool. The initial vision might involve ingesting MLS data, public records, and even social media sentiment to create a unique scoring model. While the aspiration is noble, the technical hurdles are immense. Reconciling inconsistent property identifiers across different data sources, ensuring data freshness for a dynamic market, and building reliable models that account for local market nuances can consume a disproportionate amount of early-stage capital and talent. This often leads to a scenario where the core product—the valuation tool—remains in beta for an extended period, while the underlying data infrastructure becomes a complex, costly, and often brittle entity in itself.
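To make the identifier problem concrete, here is a minimal Python sketch of one common workaround: deriving a rough join key from a street address and ZIP code so that records from two sources can be matched. The abbreviation tables, the `address_key` and `match_records` helpers, and the `address` and `zip` field names are all illustrative assumptions, not part of any real MLS or county schema, and production matching typically needs far more than this (geocoding, parcel numbers, fuzzy matching).

```python
import re

# Hypothetical abbreviation tables; a real pipeline would need much larger ones.
SUFFIXES = {"street": "st", "avenue": "ave", "road": "rd", "drive": "dr",
            "boulevard": "blvd", "lane": "ln", "court": "ct"}
UNIT_WORDS = {"apt", "apartment", "unit", "suite", "ste"}


def address_key(address: str, postal_code: str) -> str:
    """Build a rough join key from a free-text address and a ZIP code."""
    text = address.lower().replace("#", " unit ")
    text = re.sub(r"[^a-z0-9\s]", " ", text)  # drop punctuation
    tokens = []
    for token in text.split():
        if token in UNIT_WORDS:
            token = "unit"  # collapse apt/suite/# into one marker
        tokens.append(SUFFIXES.get(token, token))
    return " ".join(tokens) + "|" + postal_code.strip()[:5]


def match_records(mls_rows: list[dict], county_rows: list[dict]) -> list[tuple]:
    """Pair each MLS row with the county row sharing its key (or None)."""
    index = {address_key(r["address"], r["zip"]): r for r in county_rows}
    return [(m, index.get(address_key(m["address"], m["zip"]))) for m in mls_rows]
```

Even this toy version shows why the work is costly: every new source brings its own spelling, unit, and ZIP conventions, and each one needs its own rules and tests.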

Strategic Data Partnerships: A New Paradigm for Scale

The most successful PropTech companies today are those that have embraced a different philosophy: strategic data partnerships. This approach involves integrating with specialized data providers who offer production-ready real estate data via APIs. Instead of dedicating significant engineering effort to building and maintaining internal data pipelines, these companies leverage these external integrations as a foundational layer, treating data as a utility rather than a core competency requiring in-house development.

A strategic data partnership is not about relinquishing control of one’s product. Rather, it’s about strategically outsourcing the acquisition and maintenance of commoditized data infrastructure to free up internal resources to focus on what truly differentiates the company. This could be anything from a superior user experience and intuitive workflow automation to advanced artificial intelligence capabilities or unique business logic.

The benefits of this model are multifaceted. By integrating pre-structured, continuously refreshed datasets, PropTech teams can significantly accelerate their product development cycles. They avoid the months of engineering time typically consumed by scraping, cleaning, and updating fragmented sources. This allows them to bring innovative features to market faster, respond more agilely to market changes, and ultimately, outpace competitors who are bogged down by internal data infrastructure challenges.

The Build vs. Partner Decision: A Strategic Crossroads

Every PropTech founder inevitably faces the critical decision: should they build a specific capability internally or seek a strategic partnership? This choice has profound implications, influencing not only the product’s architecture but also its speed to market, financial burn rate, and long-term scalability.

When Building Internally Makes Strategic Sense

Building internally is the optimal strategy when the capability in question forms the very core of the company’s unique value proposition. If a feature or data process is central to the product’s differentiation and is not easily replicable by competitors, then owning that logic can indeed strengthen defensibility. This is particularly true for proprietary underwriting algorithms, novel scoring models, or specialized workflow automation that provides a distinct competitive edge.

Internal builds are justified when they:

- Define the core competitive advantage: The feature is the primary reason customers choose the product.

- Are impossible to source externally: The required data or functionality is highly specialized and not available from third-party providers.

- Involve highly sensitive or proprietary data processing: The nature of the data requires absolute control over its handling and security.

In these scenarios, the feature or data capability is intrinsically linked to the company’s identity and market position.

When Partnering Offers a Strategic Advantage

Partnerships become the more strategic option when the data layer, while foundational, is not a primary differentiator. Real estate data is characterized by its complexity, fragmentation, and the constant need for aggregation, cleaning, validation, and daily updates. Replicating this infrastructure internally often diverts critical resources from core product development and innovation.

Partnering becomes a strategic imperative when:

- The data is a commodity for the specific use case: The data itself is widely available but requires significant effort to aggregate and maintain.

- Speed to market is critical: Integrating with a mature data provider allows for rapid deployment of features dependent on that data.

- Capital and engineering resources are constrained: Building and maintaining data infrastructure is resource-intensive and can strain a startup’s budget and talent pool.

- Focus on core differentiation is paramount: The company’s unique value lies in its application of data, not in its collection and normalization.

In these situations, integration is not a shortcut but a deliberate and intelligent allocation of resources, allowing the company to leverage external expertise and infrastructure to achieve scalability.
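The two checklists above can be condensed into a small decision helper. The sketch below is only an illustration of that reasoning in Python; the `Capability` fields and the `recommend` function are hypothetical names, and a real decision would weigh cost, timing, and risk rather than three booleans.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Capability:
    name: str
    is_core_differentiator: bool    # primary reason customers choose the product
    available_from_providers: bool  # a third party can supply it
    needs_full_data_control: bool   # sensitive or proprietary processing


def recommend(cap: Capability) -> str:
    """Return "build" or "partner" following the criteria in this section."""
    if cap.is_core_differentiator or cap.needs_full_data_control:
        return "build"
    if not cap.available_from_providers:
        return "build"  # nothing to partner on
    return "partner"
```

For example, a proprietary scoring model would be described with `is_core_differentiator=True` and come back as `"build"`, while nationwide listing aggregation that providers already offer would come back as `"partner"`.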

Partnerships as a Capital Allocation Strategy

The decision to partner for data infrastructure is fundamentally a capital allocation strategy. In early-stage and growth-stage PropTech companies, engineering time is arguably the most valuable and expensive resource. Every month spent building and maintaining internal data pipelines is a month that could have been dedicated to enhancing the product experience, developing innovative automation features, or solidifying core differentiators.

The sheer scale of reconstructing nationwide real estate data infrastructure internally is immense. It involves aggregating millions of MLS records, harmonizing disparate property attributes, modeling complex rental income streams, cleaning and validating short-term rental signals, accurately calculating occupancy trends, and continuously refreshing all these metrics on a daily basis. This is a continuous, labor-intensive, and largely invisible process to the end-user, yet it is essential for any data-driven PropTech offering.

By integrating with mature real estate data APIs, companies gain immediate access to a wealth of pre-processed and structured information. This typically includes comprehensive property listings, historical transaction data, detailed rental comparables, neighborhood analytics, short-term rental performance metrics (occupancy, ADR, RevPAR), and key investment indicators like ROI projections. This data is delivered through structured, machine-readable endpoints, ready for immediate integration into product features.

The result is an immediate acceleration of development and deployment. Teams significantly reduce their infrastructure overhead, preserve precious engineering focus for high-impact initiatives, and move much more rapidly toward revenue-generating features. For venture-backed PropTech companies, in particular, these partnerships are not about outsourcing capability; they are about directing capital toward true innovation and allowing specialized data providers to shoulder the burden of data acquisition and maintenance.

Applied Examples Across PropTech Verticals

The strategic value of data partnerships becomes particularly evident when examined across various PropTech verticals:

Marketplaces

Challenge: Property marketplaces require accurate, up-to-date listings, pricing histories, neighborhood benchmarks, rental comparables, and investment indicators across numerous markets.

Risk of Building: In-house ingestion and normalization of MLS data, property attribute harmonization, and performance modeling demand constant updates and multi-source validation. Expanding coverage one market at a time slows growth and introduces data inconsistencies between markets.

Strategic Partnership: Integrating structured property endpoints, search APIs, and nationwide neighborhood analytics from a specialized provider allows marketplaces to bypass the construction of non-differentiating infrastructure.

Scaling Outcome: Engineering resources can be directed towards enhancing liquidity, improving user experience, and optimizing transaction workflows—the true drivers of marketplace value.

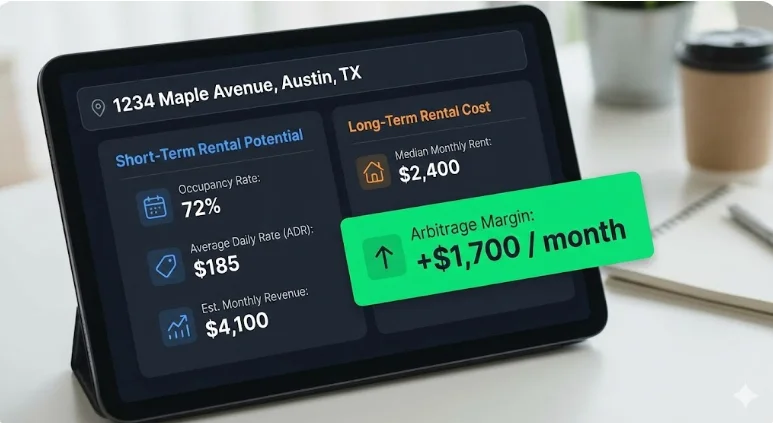

Example: Rapid Product Launch for an STR Underwriting Tool

A PropTech startup developing a specialized tool for underwriting short-term rental (STR) properties faced a critical time-to-market challenge. By leveraging an existing real estate API that provided comprehensive STR performance data, including occupancy rates, average daily rates (ADR), and revenue per available room (RevPAR), the company was able to launch production-grade analytics in under two weeks. Instead of spending months painstakingly normalizing listings, rental performance data, and ROI calculations from various sources, the team focused on refining the user interface, optimizing workflows, and developing sophisticated deal evaluation logic. This agile approach allowed them to quickly test product-market fit, onboard early adopters, and iterate rapidly, all without the immediate need to build a dedicated data engineering team.
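For readers less familiar with the three STR metrics named above, the following Python sketch shows how they relate, using the standard hospitality definitions: occupancy is booked nights over available nights, ADR is revenue over booked nights, and RevPAR is revenue over available nights (equivalently, ADR times occupancy). The `StrMonth` record and its field names are assumptions made for illustration, not any provider's schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StrMonth:
    available_nights: int  # nights the listing could have been booked
    booked_nights: int     # nights actually booked
    revenue: float         # booking revenue for the month


def occupancy(m: StrMonth) -> float:
    """Share of available nights that were booked."""
    return m.booked_nights / m.available_nights if m.available_nights else 0.0


def adr(m: StrMonth) -> float:
    """Average daily rate: revenue per booked night."""
    return m.revenue / m.booked_nights if m.booked_nights else 0.0


def revpar(m: StrMonth) -> float:
    """Revenue per available night; equals adr(m) * occupancy(m)."""
    return m.revenue / m.available_nights if m.available_nights else 0.0


month = StrMonth(available_nights=30, booked_nights=21, revenue=4200.0)
print(occupancy(month), adr(month), revpar(month))  # 0.7 200.0 140.0
```

The arithmetic is simple; the months of effort described above go into producing trustworthy inputs for it across thousands of listings.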

CRMs & Deal Management Platforms

Challenge: Users often exit CRM platforms to validate financial assumptions elsewhere, leading to fragmented workflows and reduced platform stickiness.

Risk of Building: Developing an in-house underwriting layer necessitates merging property data, STR metrics, long-term rental estimates, historical performance data, and ROI calculations. Each of these datasets requires meticulous cleaning and daily updates.

Strategic Partnership: Embedding unified real estate APIs directly within the CRM platform allows for seamless deal validation, enhancing user experience and retention.

Scaling Outcome: The time required for deal decision-making is significantly reduced. User retention improves as the CRM evolves from a simple workflow tool into a comprehensive decision-support engine.

AI Underwriting & Analytics Tools

Challenge: Machine learning systems are heavily reliant on structured, time-series datasets with consistent schemas and reliable inputs to produce accurate and stable outputs.

Risk of Building: Data acquired through scraping often lacks essential normalization, sample size validation, or fallback logic, leading to inconsistent inputs and unreliable model performance.

Strategic Partnership: Integrating APIs that deliver consistent, long-term performance data (e.g., 36 months of monthly performance metrics), pre-calculated investment metrics, and statistical confidence indicators enables more robust model training and faster deployment cycles.

Scaling Outcome: AI-driven underwriting and analytics tools can transition from prototype to production more rapidly, with a reduced risk of modeling instability due to data quality issues.
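Whichever route a team takes, it helps to check a monthly series before it reaches a model. The Python sketch below illustrates the kinds of checks this section alludes to: required fields, a minimum sample size, and a gap-free 36-month sequence. The field names, the `"YYYY-MM"` month format, and the thresholds are assumptions chosen for the example.

```python
# Hypothetical field names; adjust to whatever schema the data actually uses.
REQUIRED_FIELDS = ("month", "occupancy", "adr", "sample_size")


def find_issues(rows: list[dict], expected_months: int = 36,
                min_sample: int = 5) -> list[str]:
    """Return human-readable problems found in a monthly series."""
    issues = []
    for i, row in enumerate(rows):
        missing = [f for f in REQUIRED_FIELDS if row.get(f) is None]
        if missing:
            issues.append(f"row {i}: missing {', '.join(missing)}")
        elif row["sample_size"] < min_sample:
            issues.append(f"row {i}: sample_size {row['sample_size']} below {min_sample}")

    # Months are assumed to be "YYYY-MM" strings, which sort chronologically.
    months = sorted({r["month"] for r in rows if r.get("month")})
    index = [int(m[:4]) * 12 + int(m[5:7]) for m in months]
    gaps = sum(1 for a, b in zip(index, index[1:]) if b - a > 1)
    if gaps:
        issues.append(f"{gaps} gap(s) in monthly sequence")
    if len(months) < expected_months:
        issues.append(f"{len(months)} distinct months, expected {expected_months}")
    return issues
```

An empty list means the series passed these particular checks; it does not prove the underlying numbers are accurate.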

Investment & Portfolio Platforms

Challenge: Institutional-grade underwriting demands comprehensive data on occupancy, ADR, RevPAR, rental comparables, expense modeling, and ROI projections across multiple markets. Furthermore, identifying profitable rental arbitrage opportunities requires evaluating both short-term and long-term rental potential concurrently.

Risk of Building: Recreating nationwide data coverage with daily refresh cycles is capital-intensive and time-consuming. Maintaining separate pipelines for short-term and long-term rental data can roughly double the infrastructure burden, because each pipeline needs its own ingestion, cleaning, and refresh logic.

Strategic Partnership: Leveraging harmonized datasets with unified schemas and pre-modeled financial indicators eliminates significant infrastructure drag. Partnering for an API that provides both STR and LTR data unlocks immediate arbitrage analysis without the need for managing multiple, competing data subscriptions.

Scaling Outcome: Investment platforms can deliver sophisticated institutional-grade analytics and deep arbitrage modeling capabilities without the prohibitive overhead of building and maintaining such complex data infrastructure, thereby accelerating market expansion.
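As a rough illustration of why arbitrage analysis needs both datasets at once, the Python sketch below estimates a monthly margin from STR inputs (ADR and occupancy) and an LTR input (monthly rent). It is a deliberately simplified model under stated assumptions: a 30-night month, a single lump-sum `operating_costs` figure, and no taxes, fees, or seasonality. The function names are hypothetical.

```python
def str_monthly_revenue(adr: float, occupancy: float, nights: int = 30) -> float:
    """Estimated gross STR revenue: nightly rate x share of nights booked x nights."""
    return adr * occupancy * nights


def arbitrage_margin(adr: float, occupancy: float, ltr_rent: float,
                     operating_costs: float = 0.0, nights: int = 30) -> float:
    """Monthly STR revenue minus the long-term rent paid and operating costs."""
    return str_monthly_revenue(adr, occupancy, nights) - ltr_rent - operating_costs


# Example inputs are made up for illustration.
margin = arbitrage_margin(adr=180.0, occupancy=0.65, ltr_rent=2200.0,
                          operating_costs=600.0)
print(round(margin, 2))  # 710.0
```

A positive margin only suggests a market deserves a closer look; a real underwriting model would add seasonality, regulation, and vacancy risk.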

The Competitive Advantage of Velocity

In the dynamic real estate market, speed is not merely a desirable attribute; it is a strategic imperative. Short-term rental revenues can fluctuate significantly due to seasonal demand, supply levels evolve as new properties enter the market, regulatory landscapes are subject to change, and interest rates can alter underwriting assumptions almost overnight.

Companies that opt to build every data layer internally often face prolonged periods of pipeline stabilization before any features can be launched. By the time their infrastructure is deemed ready, the market may have already shifted, rendering their initial efforts less impactful.

In contrast, companies that strategically integrate mature data infrastructure can achieve several key advantages:

- Faster Feature Deployment: Launching revenue-generating features in weeks rather than months.

- Agile Market Response: Adapting quickly to changing market conditions and competitive pressures.

- Accelerated Learning Cycles: Gathering user feedback and iterating on product development at a significantly higher velocity.

This compounding effect of speed—shipping earlier, gaining faster feedback, accelerating revenue generation, and solidifying brand positioning—can be the decisive factor in establishing market leadership. In highly competitive PropTech segments, the difference between leading and lagging is often measured not by the novelty of an idea, but by the velocity of its execution.

The Modern Scaling Model: Builders vs. Orchestrators

As the PropTech industry matures, a clear dichotomy in scaling models is emerging: the "Builders" and the "Orchestrators."

Builders adopt a philosophy of owning every layer of their technology stack. They ingest listings, normalize property data, model rental performance, calculate ROI, and manage all update cycles internally. While this approach offers a high degree of control, it creates significant infrastructure drag: an ongoing maintenance burden, accumulating technical debt, and slower feature velocity.

Orchestrators, on the other hand, embrace a more collaborative and focused approach. They integrate best-in-class data providers for foundational datasets, thereby treating this data as an essential utility. Their internal resources are then strategically concentrated on developing and enhancing their core differentiators, such as user experience, advanced automation, sophisticated AI logic, and innovative workflow solutions.

Builders often compete on the perceived completeness of their proprietary systems. Orchestrators, however, compete on speed, focus, and the intelligent application of readily available data. In today’s data-dense real estate markets, true differentiation rarely stems from reinventing commodity infrastructure. Instead, it arises from how intelligently that infrastructure is leveraged to solve complex problems and deliver exceptional value to users. The PropTech companies achieving the most efficient and sustainable growth today predominantly align with the orchestrator model, using partnerships to accelerate their development and build smarter, more targeted solutions.
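In code, the orchestrator model often shows up as a thin interface between product logic and whichever data source sits behind it. The Python sketch below is one way to express that idea; `RentalDataProvider`, `InMemoryProvider`, and `gross_yield` are hypothetical names invented for this example and do not correspond to any vendor SDK.

```python
from typing import Protocol


class RentalDataProvider(Protocol):
    """What the product needs from a data source, and nothing more."""

    def monthly_rent_estimate(self, property_id: str) -> float: ...


class InMemoryProvider:
    """Stand-in for a real provider; useful for tests and prototypes."""

    def __init__(self, rents: dict[str, float]) -> None:
        self._rents = rents

    def monthly_rent_estimate(self, property_id: str) -> float:
        return self._rents[property_id]


def gross_yield(provider: RentalDataProvider, property_id: str,
                purchase_price: float) -> float:
    """Annual rent divided by purchase price, using any conforming provider."""
    return provider.monthly_rent_estimate(property_id) * 12 / purchase_price


provider = InMemoryProvider({"p1": 2000.0})
print(gross_yield(provider, "p1", purchase_price=300_000.0))
```

Because `gross_yield` depends only on the interface, swapping the in-memory stand-in for an API-backed provider, or for an internal pipeline later, leaves the differentiating logic untouched.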

What an Infrastructure-Level Data Partnership Looks Like in Practice

In practical terms, a strategic data partnership translates to integrating a unified, production-ready real estate API rather than attempting to build fragmented, internal data pipelines. Infrastructure-grade solutions, such as the Mashvisor API, consolidate critical property data—including MLS-style listings, short-term rental performance metrics (occupancy, ADR, RevPAR), long-term rental estimates, and comprehensive investment analytics—into structured REST endpoints specifically designed for PropTech applications.

Instead of laboriously stitching together scraped calendar data, rental platforms, and public records, PropTech teams can access clean, machine-readable JSON data that includes detailed performance metrics, built-in ROI calculations, and up to 36 months of historical performance data, all refreshed daily and covering all 50 U.S. states.

This approach effectively removes the significant burden of multi-source aggregation, data normalization, and ongoing maintenance. It liberates engineering teams to concentrate their efforts on developing intuitive workflows, implementing sophisticated automation, building advanced AI models, and enhancing the overall user experience, all while relying on a validated and continuously updated data infrastructure operating seamlessly in the background. This is the tangible outcome of a strategic data partnership executed effectively.
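On the consuming side, integration usually comes down to parsing a JSON payload into a typed record and rejecting anything malformed. The Python sketch below shows that step using only the standard library. The payload shape and the field names (`city`, `state`, `occupancy`, `adr`) are invented for illustration and are not taken from the Mashvisor API or any other provider's documentation; consult the provider's reference for the real schema.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class MarketSnapshot:
    city: str
    state: str
    occupancy: float  # fraction between 0 and 1
    adr: float        # average daily rate in dollars


def parse_snapshot(payload: str) -> MarketSnapshot:
    """Parse a hypothetical JSON payload, raising ValueError on bad input."""
    data = json.loads(payload)
    try:
        snapshot = MarketSnapshot(
            city=str(data["city"]),
            state=str(data["state"]),
            occupancy=float(data["occupancy"]),
            adr=float(data["adr"]),
        )
    except KeyError as exc:
        raise ValueError(f"missing field: {exc.args[0]}") from exc
    if not 0.0 <= snapshot.occupancy <= 1.0:
        raise ValueError("occupancy must be between 0 and 1")
    return snapshot


# Made-up sample payload, not real market data.
sample = '{"city": "Austin", "state": "TX", "occupancy": 0.62, "adr": 185.5}'
print(parse_snapshot(sample))
```

Validating at the boundary keeps a partner's schema changes from silently leaking into product features.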

Conclusion: The Evolving PropTech Playbook

In the data-intensive world of real estate technology, the initial impulse towards complete control of data infrastructure can feel like a strategic advantage. However, the practical reality demonstrates that leverage, intelligently applied, is a far more potent driver of growth and success. Strategic data partnerships empower PropTech companies to scale efficiently without overextending their engineering teams or compromising product innovation timelines. By integrating mature, structured datasets that span property intelligence, rental performance, and investment analytics, companies can strategically allocate their internal resources to what truly sets them apart: workflow optimization, automation, AI development, and user experience design.

The decision to build or partner is no longer merely a technical consideration; it is a fundamental strategic choice. Founders must critically assess not only whether they can build a specific data capability but, more importantly, whether building it actively moves them closer to their core competitive advantage. The PropTech companies experiencing the most rapid growth in today’s landscape are not necessarily those that amass the largest volumes of raw data. Instead, they are the organizations that excel at connecting the right data, through the optimal infrastructure, with unparalleled speed and precision. This strategic integration of data and technology represents the modern playbook for achieving sustainable growth in the PropTech sector.

Who Strategic Data Partnerships Are Best For

This model of leveraging external data infrastructure is particularly well-suited for PropTech teams that:

- Prioritize rapid product development and market entry.

- Seek to focus engineering talent on core product differentiation and innovation.

- Operate with resource constraints typical of early-stage or growth-stage companies.

- Require access to extensive, high-quality real estate data without the overhead of building and maintaining it.

- Are looking to enhance their existing platforms with advanced data analytics and insights.

This approach may not be the ideal fit for companies whose primary business model revolves around data licensing or those whose core differentiator is the creation of proprietary, unique datasets. However, for the vast majority of PropTech firms aiming for scalable growth, strategic data partnerships offer a clear path to achieving their objectives. Many leading PropTech startups, investment platforms, and analytics tools already rely on such partnerships for their production environments.

Exploring Build vs. Partner for Your Data Stack?

For PropTech leaders currently evaluating the complex decision of whether to build their own real estate data infrastructure or integrate with an API partner, a structured assessment can be invaluable. Engaging with experts to pressure-test architectural choices and long-term roadmaps can provide crucial insights. Booking a brief introductory call with a specialized data API provider can facilitate a thorough discussion of specific use cases, technical requirements, and ambitious scaling goals, helping to clarify the most effective path forward.